Can We Trust AI If We Can’t Explain It?

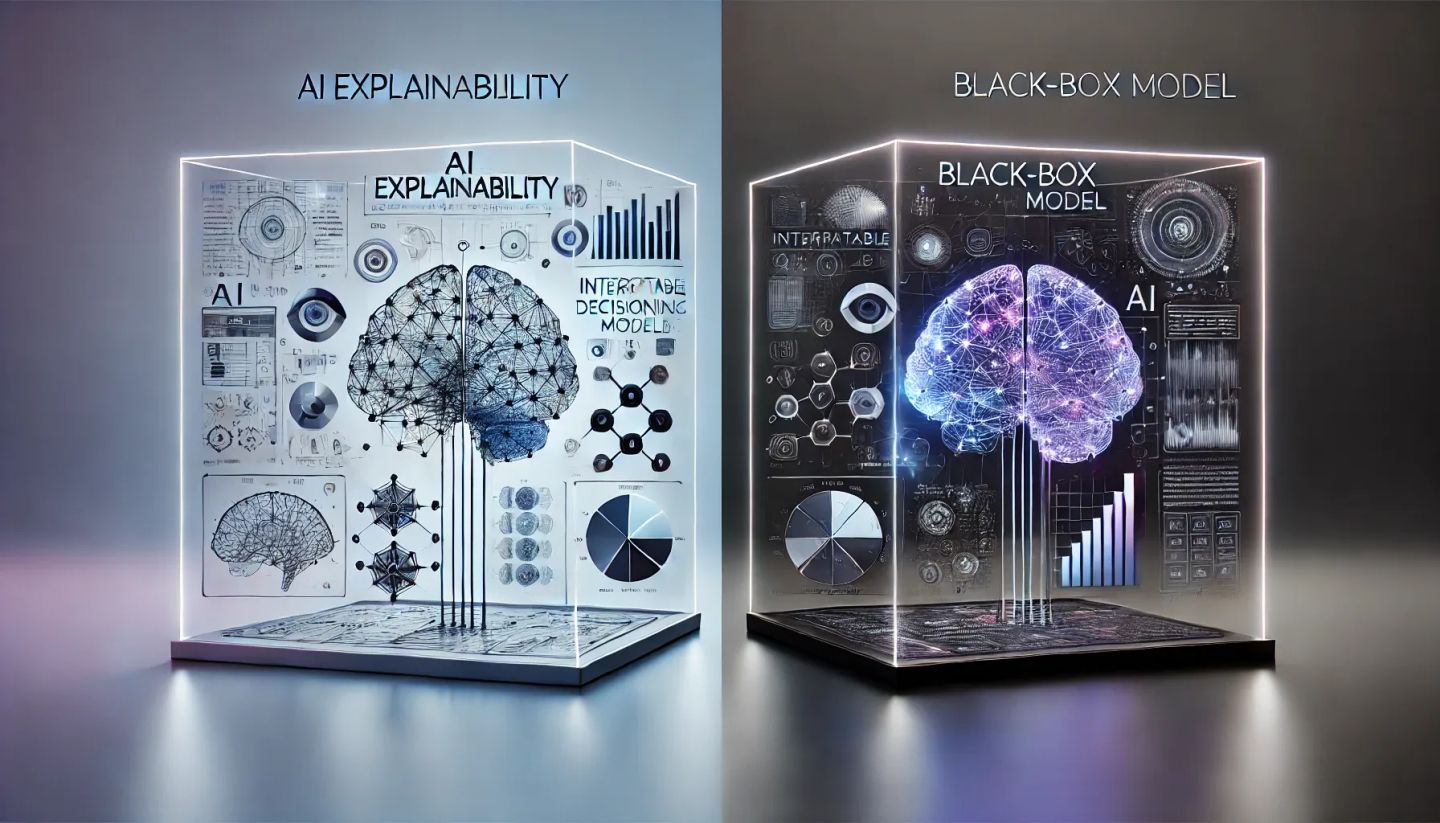

AI is making life-altering decisions—from approving loans and diagnosing diseases to influencing hiring and criminal sentencing. But how do we ensure these decisions are fair, unbiased, and accountable when many AI models operate as black boxes, making predictions with little to no transparency?

The explainability vs. black-box AI dilemma is at the heart of ethical AI governance. The EU AI Act and the voluntary NIST AI Risk Management Framework both stress the need for AI systems to be transparent, accountable, and explainable. But at what cost?

Are explainable models sacrificing performance, and do black-box models inherently lack accountability? Let’s break down this ethical challenge and explore how businesses can navigate the trade-off between AI accuracy and transparency.

1. What Are Black-Box AI Models?

A black-box AI model is an algorithm that makes decisions in a way that is not easily interpretable by humans. While these models (e.g., deep learning neural networks) can deliver highly accurate predictions, their decision-making process is often opaque.

Why Do Companies Use Black-Box AI?

🔹 Higher accuracy in complex tasks (e.g., fraud detection, medical diagnosis).

🔹 Ability to learn hidden patterns that simpler models might miss.

🔹 Scalability for massive datasets in industries like finance and cybersecurity.

The Risks of Black-Box AI

⚠️ Lack of accountability – Who takes responsibility for AI errors?

⚠️ Regulatory non-compliance – The EU AI Act imposes transparency and human-oversight obligations on high-risk AI applications.

⚠️ Bias & unfairness – Biases hidden inside a black-box model are hard to detect and can lead to discriminatory outcomes.

🔹 Example: A widely used U.S. healthcare algorithm for allocating extra care favored white patients over equally sick Black patients, because it used past healthcare spending as a proxy for medical need, a bias hidden in its training data.

2. AI Explainability: Making AI Decisions Transparent

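Explainability, or XAI (Explainable AI), refers to models and techniques that make AI decisions understandable to humans, either by using inherently interpretable models or by explaining a black-box model's outputs after the fact. The goal is to ensure AI-generated decisions can be examined, justified, and trusted.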

Why Explainability Matters

✅ Regulatory Compliance – The EU AI Act requires transparency for high-risk AI systems, and the voluntary NIST AI RMF treats explainability and interpretability as characteristics of trustworthy AI.

✅ User Trust – Customers, regulators, and stakeholders expect AI to be fair and understandable.

✅ Bias Detection – Transparent models help organizations identify and mitigate biases in AI decision-making.

🔹 Example: In 2018, an AI-based hiring tool was found to favor male candidates due to biased training data. An explainable AI approach could have detected this issue earlier.
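To make the bias-detection point concrete, here is a minimal Python sketch of an interpretable linear scoring model. The feature names, weights, and helper functions are hypothetical and invented for illustration; they are not taken from the 2018 case. Because the score is a plain weighted sum, each feature's contribution to a decision can be listed directly, and a reviewer can spot a non-zero weight on a gender-correlated proxy feature.

```python
# Hypothetical, hand-set weights for an interpretable linear scoring model.
# Feature names and values are invented for illustration only.
WEIGHTS = {
    "years_experience": 0.40,
    "relevant_degree": 0.80,
    "womens_club_member": -0.90,  # a gender-correlated proxy the model should not use
}
BIAS = -1.0


def score(candidate: dict[str, float]) -> float:
    """Linear score: intercept plus the weighted sum of feature values."""
    return BIAS + sum(WEIGHTS[name] * candidate.get(name, 0.0) for name in WEIGHTS)


def explain(candidate: dict[str, float]) -> list[tuple[str, float]]:
    """Per-feature contributions (weight * value), largest magnitude first."""
    contributions = [(name, WEIGHTS[name] * candidate.get(name, 0.0)) for name in WEIGHTS]
    return sorted(contributions, key=lambda item: abs(item[1]), reverse=True)


def flag_proxy_features(proxies: set[str], tolerance: float = 1e-9) -> list[str]:
    """Return the listed proxy features that carry a non-zero weight in the model."""
    return [name for name in proxies if abs(WEIGHTS.get(name, 0.0)) > tolerance]


if __name__ == "__main__":
    applicant = {"years_experience": 3, "relevant_degree": 1, "womens_club_member": 1}
    print(f"score = {score(applicant):.2f}")
    for name, contribution in explain(applicant):
        print(f"{name:>20}: {contribution:+.2f}")
    print("proxy features in use:", flag_proxy_features({"womens_club_member"}))
```

A deep network offers no such weight table to inspect, which is why the same audit on a black-box model requires post-hoc tools.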

Challenges of Explainable AI

🔹 Trade-offs in performance – Simple, interpretable models might be less accurate than deep learning models.

🔹 Scalability issues – Generating explanations can add processing time and cost; exact Shapley-value attributions, for example, grow exponentially with the number of features.

🔹 Defining explainability – Different industries need different levels of transparency, so there is no single definition of "explainable enough."

3. Finding the Right Balance: Explainability vs. Performance

Organizations must strike a balance between AI performance and explainability, depending on the risk level and application.

How to Decide Between Explainable AI and Black-Box AI

🔹 For high-risk decisions (e.g., healthcare, finance, criminal justice): Use interpretable models with clear decision-making logic.

🔹 For low-risk applications (e.g., product recommendations): Black-box AI with human oversight may be acceptable.

🔹 For AI-driven automation (e.g., fraud detection, cybersecurity): A hybrid approach that pairs a black-box model with post-hoc explanation tools (e.g., LIME, SHAP) can help.
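SHAP builds on Shapley values from cooperative game theory: a feature's attribution is its average marginal effect on the prediction across all orderings of the features. The Python sketch below computes exact Shapley values from scratch for a hypothetical three-feature fraud score, filling in "absent" features with a single baseline transaction. It does not use the `shap` library; the model, baseline, and function names are illustrative assumptions. Practical SHAP explainers approximate or specialize this computation, because the exact version enumerates every feature subset.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(
    model: Callable[[Sequence[float]], float],
    x: Sequence[float],
    baseline: Sequence[float],
) -> list[float]:
    """Exact Shapley attributions explaining model(x) - model(baseline).

    Features outside a coalition take their baseline value. The loops make
    O(n * 2^n) model calls, so this only suits a handful of features.
    """
    n = len(x)

    def value(coalition: frozenset) -> float:
        # Use x for features in the coalition and the baseline everywhere else.
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions S of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def fraud_score(features: Sequence[float]) -> float:
    """Hypothetical black-box stand-in: amount (in thousands), foreign flag, night flag."""
    amount, is_foreign, is_night = features
    return 0.1 * amount + 0.3 * is_foreign + 0.5 * is_foreign * is_night


if __name__ == "__main__":
    transaction = [5.0, 1.0, 1.0]  # hypothetical flagged transaction
    typical = [1.0, 0.0, 0.0]      # hypothetical "normal" baseline transaction
    phi = shapley_values(fraud_score, transaction, typical)
    for name, contribution in zip(["amount", "is_foreign", "is_night"], phi):
        print(f"{name:>10}: {contribution:+.3f}")
    # Efficiency property: attributions sum to model(x) - model(baseline).
    print(f"sum = {sum(phi):.3f}, gap = {fraud_score(transaction) - fraud_score(typical):.3f}")
```

For this score, `amount` receives 0.4, and the foreign/night interaction term is split equally between its two features, giving `is_foreign` 0.55 and `is_night` 0.25. Together they account for the full 1.2 gap between the flagged and baseline scores.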

🔹 Example: Banks use black-box AI for fraud detection, but when a transaction is flagged as fraudulent, a human-in-the-loop system reviews it for final approval.
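The human-in-the-loop pattern in the bank example can be sketched as a simple routing rule. In this hypothetical Python sketch, the model's fraud probability never blocks a transaction on its own: scores at or above a review threshold are queued for an analyst together with the top explanation factors, and only the analyst's decision is final. The threshold value and the `Transaction`, `ReviewQueue`, `route`, and `analyst_decision` names are illustrative assumptions, not any bank's actual system.

```python
from dataclasses import dataclass, field

REVIEW_THRESHOLD = 0.8  # hypothetical cut-off; a real system would tune this


@dataclass
class Transaction:
    tx_id: str
    fraud_probability: float  # output of the black-box model
    top_factors: list[str]    # e.g., produced by a post-hoc explainer such as SHAP


@dataclass
class ReviewQueue:
    pending: list[Transaction] = field(default_factory=list)

    def submit(self, tx: Transaction) -> None:
        self.pending.append(tx)


def route(tx: Transaction, queue: ReviewQueue) -> str:
    """Approve low-risk transactions automatically; hold flagged ones for a human."""
    if tx.fraud_probability >= REVIEW_THRESHOLD:
        queue.submit(tx)
        return "held_for_review"
    return "auto_approved"


def analyst_decision(tx: Transaction, confirmed_fraud: bool) -> str:
    """The human reviewer, not the model, makes the final call on flagged cases."""
    return "blocked" if confirmed_fraud else "released"


if __name__ == "__main__":
    queue = ReviewQueue()
    print(route(Transaction("t1", 0.12, ["amount"]), queue))                    # auto_approved
    print(route(Transaction("t2", 0.93, ["new_device", "foreign_ip"]), queue))  # held_for_review
    flagged = queue.pending[0]
    print(flagged.tx_id, flagged.top_factors)
    print(analyst_decision(flagged, confirmed_fraud=False))                     # released
```

Passing the explanation factors along with the flag gives the analyst a starting point for the review instead of a bare score.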

4. Strategies for Ethical AI Governance

To ensure AI is both high-performing and accountable, businesses should adopt AI governance frameworks and best practices.

Best Practices for Balancing Explainability & Performance

✅ Use AI Explainability Tools – Leverage SHAP, LIME, and other interpretability frameworks.

✅ Adopt Risk-Based AI Governance – High-risk AI applications must prioritize transparency and fairness.

✅ Implement AI Ethics Committees – Establish internal oversight for AI decision-making.

✅ Ensure Regulatory Compliance – Align AI practices with the GDPR, the EU AI Act, and ISO/IEC 42001.

✅ Incorporate Human Oversight – Hybrid AI systems should allow human intervention for critical decisions.
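Risk-based governance is often written down as a deployment checklist. The Python sketch below encodes the tiering from section 3 as a hypothetical policy table: high-risk uses require an interpretable model, automation uses require post-hoc explanations, and every tier requires human oversight. The tier names, control names, and `missing_controls` function are illustrative assumptions; they do not reproduce the EU AI Act's legal risk categories or obligations.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"                # e.g., product recommendations
    AUTOMATION = "automation"  # e.g., fraud detection, cybersecurity
    HIGH = "high"              # e.g., healthcare, finance, criminal justice


# Hypothetical controls each tier must have in place before deployment.
POLICY: dict[RiskTier, list[str]] = {
    RiskTier.LOW: ["performance_monitoring", "human_oversight"],
    RiskTier.AUTOMATION: ["performance_monitoring", "human_oversight", "post_hoc_explanations"],
    RiskTier.HIGH: [
        "performance_monitoring",
        "human_oversight",
        "bias_audit",
        "ethics_committee_signoff",
        "interpretable_model",
    ],
}


def missing_controls(tier: RiskTier, controls_in_place: set[str]) -> list[str]:
    """List the required controls for this tier that are not yet in place."""
    return [control for control in POLICY[tier] if control not in controls_in_place]


if __name__ == "__main__":
    # A high-risk black-box model with every other control in place.
    in_place = {"performance_monitoring", "human_oversight", "bias_audit", "ethics_committee_signoff"}
    print(missing_controls(RiskTier.HIGH, in_place))  # ['interpretable_model']
```

Keeping the policy as data rather than scattered `if` statements makes it easier for an ethics committee to review and update the rules.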

5. Future Trends: The Push for AI Transparency

🔹 AI Regulations Will Continue to Expand – The EU AI Act is expected to shape AI transparency rules well beyond Europe, much as the GDPR did for data protection.

🔹 Advancements in XAI – More companies are investing in self-explaining AI models.

🔹 Consumer Demand for AI Transparency – Businesses that embrace ethical AI will gain a competitive advantage.

🔹 Example: Some AI chatbot providers now show cited sources alongside generated answers, making responses easier to verify and misinformation easier to catch.

Final Thoughts: AI Must Be Ethical, Accountable, and Explainable

As AI continues to evolve, businesses must decide when transparency should take priority over the extra accuracy of complex models. AI explainability is not just a technical challenge; it’s an ethical necessity.

Regulations are tightening, and public trust in AI depends on clear, fair, and accountable AI systems. The future belongs to companies that embrace responsible AI governance and balance innovation with ethics.