Most organizations today do not have an AI model problem.

They have an AI deployment confidence problem.

AI systems are moving into production faster than organizations can confidently approve, monitor, and defend them. Across enterprises, governments, and regulated industries, the challenge is no longer whether AI can create value. The challenge is whether organizations can prove that an AI system is safe, controlled, accountable, and ready for real-world use.

This is where a critical gap is emerging between AI governance and AI assurance.

And increasingly, that gap is being filled by something much more operational:

AI Readiness.

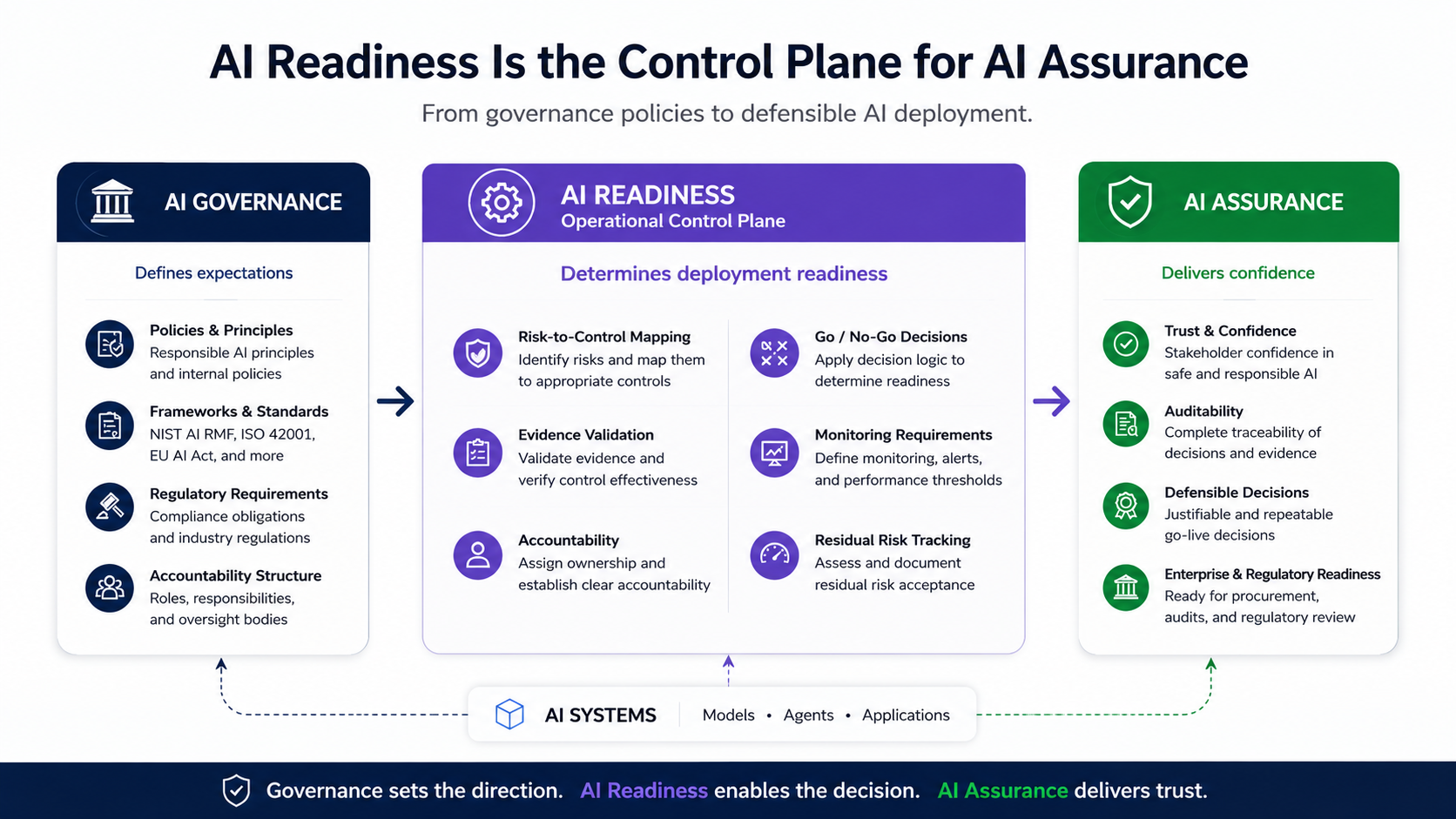

Governance Defines Expectations. Readiness Determines Deployment.

Over the last two years, organizations have invested heavily in:

- Responsible AI principles

- AI governance committees

- AI policies

- Risk frameworks

- Regulatory mapping

- Compliance programs

Frameworks like the NIST AI RMF, ISO/IEC 42001, and the EU AI Act have helped organizations establish a common language for AI oversight.

But in practice, many organizations still struggle when one simple question appears:

“Can this AI system safely go live right now?”

That is where traditional governance approaches often begin to break down.

Policies describe intent.

Risk registers identify concerns.

Governance frameworks define obligations.

But none of these produces a deployment decision.

And when no operational decision layer exists:

- approvals become informal

- accountability becomes fragmented

- evidence is gathered reactively

- teams operate with conflicting assumptions

- enterprise procurement stalls

- audits become painful

- executives inherit unclear risk

The result is familiar across industries:

AI systems ship faster than organizations can confidently approve them.

The Missing Layer in Enterprise AI

Most enterprises already have:

- security governance

- privacy governance

- compliance governance

- risk management processes

Yet AI introduces something fundamentally different.

AI systems:

- evolve over time

- degrade silently

- influence human judgment

- generate probabilistic outputs

- operate across workflows and business functions

- create difficult-to-predict failure modes

This means governance alone is insufficient.

Organizations need a system that operationalizes governance into deployment decisions.

This is the role of AI Readiness.

What Is AI Readiness?

AI Readiness is the operational decision layer between AI governance and AI deployment.

It answers one high-stakes question:

“Is this AI system safe, appropriate, and defensible for production use today?”

Importantly, AI readiness is not:

- a maturity score

- a questionnaire

- a policy checklist

- a one-time ethics review

- a generic risk assessment

It is a structured, evidence-based deployment decision.

AI readiness evaluates whether:

- controls actually exist

- evidence is credible

- safeguards are implemented

- accountability is assigned

- monitoring is operational

- residual risks are understood

- deployment is defensible

In other words:

AI Readiness converts AI governance into operational reality.
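
As a rough illustration, the criteria above can be treated as a gate that returns an explicit go/no-go decision together with the gaps that block it. The sketch below is a minimal Python example under stated assumptions: the `Check` and `decide` names, the evidence paths, and the rule that every criterion needs both evidence and a named owner are hypothetical, not taken from any standard or framework.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    GO = "go"
    NO_GO = "no-go"


@dataclass(frozen=True)
class Check:
    """One readiness criterion and what backs it up (hypothetical structure)."""
    name: str
    evidence: Optional[str]  # pointer to a test report, runbook, sign-off, etc.
    owner: Optional[str]     # named person or team accountable for the control


def decide(checks: list[Check]) -> tuple[Decision, list[str]]:
    """Return GO only if every check has both evidence and a named owner."""
    gaps = []
    for c in checks:
        if not c.evidence:
            gaps.append(f"{c.name}: no evidence")
        if not c.owner:
            gaps.append(f"{c.name}: no owner")
    return (Decision.GO if not gaps else Decision.NO_GO), gaps


# Illustrative inputs; the paths and team names are made up.
checks = [
    Check("safeguards implemented", "reports/guardrail-tests.md", "platform team"),
    Check("monitoring operational", None, "ops team"),
    Check("residual risk accepted", "signoff/risk-acceptance.pdf", None),
]
decision, gaps = decide(checks)
print(decision.value, gaps)
```

A real process would also weigh the quality of the evidence and the size of the residual risk. The point of the sketch is only that the output is a decision plus a list of gaps, not a score.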

A Simple Example

Consider a customer-support AI chatbot deployed by a bank.

Traditional governance may confirm:

- the organization has Responsible AI policies

- privacy standards exist

- AI risks are documented

- legal teams reviewed the initiative

But those activities still do not answer:

- Has the chatbot been tested for hallucinations?

- What happens if it gives financial misinformation?

- Who owns monitoring after deployment?

- How are unsafe outputs escalated?

- What controls prevent exposure of customer data?

- What evidence proves safeguards actually work?

This is where AI Readiness becomes critical.

An AI Readiness process would evaluate:

- hallucination testing results

- human escalation workflows

- monitoring and alerting mechanisms

- customer data controls

- named operational accountability

- deployment boundaries

- residual risk acceptance

Only then can a defensible go-live decision be made.

That is the difference between governance documentation and deployment readiness.

Why AI Readiness Functions Like a Control Plane

The term “control plane” is widely used in cloud and security architecture.

A control plane coordinates:

- policies

- controls

- enforcement

- visibility

- orchestration

- operational decisions

That is exactly what AI readiness does for AI deployment.

An AI Readiness control plane:

- standardizes go-live decisions

- validates deployment evidence

- maps risks to controls

- enforces accountability

- evaluates residual risk

- coordinates human oversight

- maintains deployment traceability

- supports audit and assurance workflows

Without this layer, organizations rely on fragmented approvals and subjective judgment.

With it, AI deployment becomes:

- repeatable

- measurable

- auditable

- scalable

- defensible
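
One way to picture the control-plane idea is as a small function that maps risks to controls, checks that the evidence behind each control has been validated, and writes a traceable decision record. The following Python sketch is illustrative only: `Control`, `ReadinessRecord`, `evaluate`, and the risk identifiers are hypothetical names, and the approval rule is an assumption rather than an established method.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Control:
    name: str
    mitigates: set[str]       # risk identifiers this control addresses
    evidence_validated: bool  # has someone reviewed the supporting evidence?


@dataclass
class ReadinessRecord:
    """Traceable record of one go-live decision (hypothetical structure)."""
    system: str
    approver: str
    uncovered_risks: list[str]
    approved: bool
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def evaluate(system: str, risks: list[str], controls: list[Control],
             approver: str) -> ReadinessRecord:
    """Approve only when every risk maps to at least one validated control."""
    covered: set[str] = set()
    for c in controls:
        if c.evidence_validated:
            covered |= c.mitigates
    uncovered = sorted(set(risks) - covered)
    return ReadinessRecord(system, approver, uncovered, approved=not uncovered)


# Illustrative inputs; all names are made up.
risks = ["data-leakage", "unsafe-output", "model-drift"]
controls = [
    Control("PII redaction filter", {"data-leakage"}, True),
    Control("human escalation queue", {"unsafe-output"}, True),
    Control("drift dashboard", {"model-drift"}, False),  # evidence not yet reviewed
]
record = evaluate("support-chatbot", risks, controls, approver="jane.doe")
print(record.approved, record.uncovered_risks)
```

Because the record stores the approver, the timestamp, and the risks left uncovered, the same object can serve the go-live decision and a later audit.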

Another Real-World Example

Imagine a healthcare organization deploying an AI clinical decision-support tool.

The AI helps clinicians prioritize patients based on risk scores.

The organization may already have:

- healthcare compliance programs

- security controls

- ethics policies

- governance committees

But AI readiness asks operational questions such as:

- Has the model been validated against representative patient groups?

- How does model performance differ across demographic groups?

- Can clinicians override AI recommendations?

- What happens if the model drifts over time?

- Who owns incident escalation?

- What evidence supports deployment approval?

The answers to these questions determine whether the AI is actually ready for clinical use.

Because ultimately:

- governance defines expectations

- readiness determines deployment

- assurance creates trust
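
To make the validation and demographic questions in this example concrete, one possible check (an assumption here, not a method the example prescribes) is to compare recall across patient groups and flag the model for review when the gap exceeds a tolerance. The records below are made up for illustration, and `MAX_GAP` is a hypothetical threshold.

```python
from collections import defaultdict


def recall_by_group(records):
    """records: iterable of (group, y_true, y_pred) with 0/1 labels.

    Returns recall (true positives / actual positives) for each group
    that has at least one actual positive.
    """
    true_pos = defaultdict(int)
    actual_pos = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            actual_pos[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / actual_pos[g] for g in actual_pos}


def max_recall_gap(recalls):
    """Largest difference in recall between any two groups."""
    return max(recalls.values()) - min(recalls.values())


# Toy, made-up labels purely for illustration.
records = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0), ("group_a", 1, 1),
    ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 1, 0), ("group_b", 1, 1),
    ("group_a", 0, 0), ("group_b", 0, 1),
]
recalls = recall_by_group(records)
gap = max_recall_gap(recalls)

# Hypothetical tolerance; a real threshold is a clinical and policy decision.
MAX_GAP = 0.10
print(recalls, "ready" if gap <= MAX_GAP else "needs review")
```

Recall is only one candidate metric. Which measure matters, and how large a gap is acceptable, depends on the clinical context and belongs in the readiness evidence alongside the result.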

AI Assurance Is the Outcome

AI assurance is often misunderstood as compliance documentation or post-deployment auditing.

But assurance is fundamentally about confidence.

It asks whether stakeholders can trust that:

- the AI behaves as intended

- risks are appropriately controlled

- monitoring exists

- governance decisions are operationalized

- accountability is explicit

- evidence supports deployment

AI assurance is not created by policy alone.

It is created when organizations can demonstrate operational control over AI systems.

This is why:

AI Readiness is the control plane that enables AI Assurance.

Governance defines expectations.

Readiness operationalizes controls.

Assurance provides trust and defensibility.

The Shift From AI Governance to AI Deployment Assurance

A major transition is now happening across the enterprise AI market.

Organizations are moving from:

“Do we have AI governance?”

to:

“Can we prove this AI is ready for production?”

That shift changes everything.

It changes how:

- procurement evaluates vendors

- regulators assess accountability

- boards oversee AI risk

- customers evaluate trust

- enterprises scale AI adoption

Increasingly, organizations do not just want AI policies.

They want:

- evidence

- deployment accountability

- operational assurance

- explicit go/no-go decisions

- continuous monitoring

- defensible approval records

In other words:

They want AI readiness.

Why This Matters Commercially

The organizations that solve AI readiness well will deploy AI faster and more confidently than those that do not.

Because operational trust becomes a competitive advantage.

AI readiness helps organizations:

- accelerate deployment decisions

- reduce procurement friction

- shorten enterprise sales cycles

- avoid last-minute remediation

- improve audit readiness

- reduce regulatory exposure

- establish executive confidence

- scale AI governance operationally

For example, enterprise buyers increasingly ask vendors:

- How was this AI validated?

- What safeguards exist?

- What monitoring is in place?

- Who approved deployment?

- Can you provide evidence of readiness?

Vendors that can answer these questions clearly, with evidence of readiness and assurance, will increasingly become preferred enterprise suppliers.

Not because their models are necessarily better.

But because their deployments are more trustworthy.

The Future of Responsible AI Is Operational

The next phase of AI governance will not be driven by more policy documents.

It will be driven by operational evidence.

The future belongs to organizations that can answer, clearly and defensibly:

- What does this AI system do?

- Who owns accountability?

- What safeguards exist?

- What evidence supports deployment?

- How is the system monitored?

- What residual risks remain?

- Why was this deployment approved?

Those are not theoretical governance questions.

They are operational readiness questions.

And increasingly, they are becoming the foundation of AI assurance itself.

Final Thought

The AI industry does not have a shortage of governance frameworks.

It has a shortage of deployment confidence.

AI Readiness closes that gap.

Because ultimately, every AI system reaches the same moment:

Should this AI go live: yes or no?

Governance alone cannot answer that question.

AI Readiness can.