Imagine an AI tells you that you can't get the loan you urgently need, or worse, a hospital AI mistakenly clears you as healthy. AI isn't some distant future anymore—it’s right here, embedded in our daily lives, influencing how we handle finances, healthcare, education, shopping, and even public safety.

To ensure these powerful tools benefit rather than harm us, Australia is stepping in with mandatory guardrails to guide high-risk AI use. But what does this really mean for tech creators, startups, businesses, and everyday Aussies?

High-Risk AI: Why It Matters

When we say high-risk AI, we don’t mean pesky autocorrect mishaps. We’re talking about tech decisions that can seriously alter lives:

- Finance: AI assessing loan approvals or trading stocks. One glitch could mean financial disaster.

- Healthcare: AI tools diagnosing illnesses or managing medical records. Mistakes here could endanger lives.

- Public Safety: AI used in surveillance or predictive policing. Errors here could harm privacy or wrongly accuse someone.

- Education & Retail: AI determining school admissions or personalizing shopping experiences. Biases or data breaches could hurt individuals unfairly.

As AI’s reach expands, so do public concerns around fairness, accuracy, privacy, and how data is used and protected.

Why Guardrails Are a Must, Not a Maybe

Australian regulators aren't just recommending good practices—they’re mandating them. A recent report by the Australian Securities and Investments Commission (ASIC), “Beware the Gap,” showed a troubling mismatch between AI adoption and governance readiness. Similarly, the Australian Prudential Regulation Authority (APRA) highlights the need for better oversight in banking and insurance, especially regarding AI-driven data management. Additionally, recent Australian Human Rights Commission research underscores risks like algorithmic bias, pushing the case for strict regulations to protect Australians' rights and privacy.

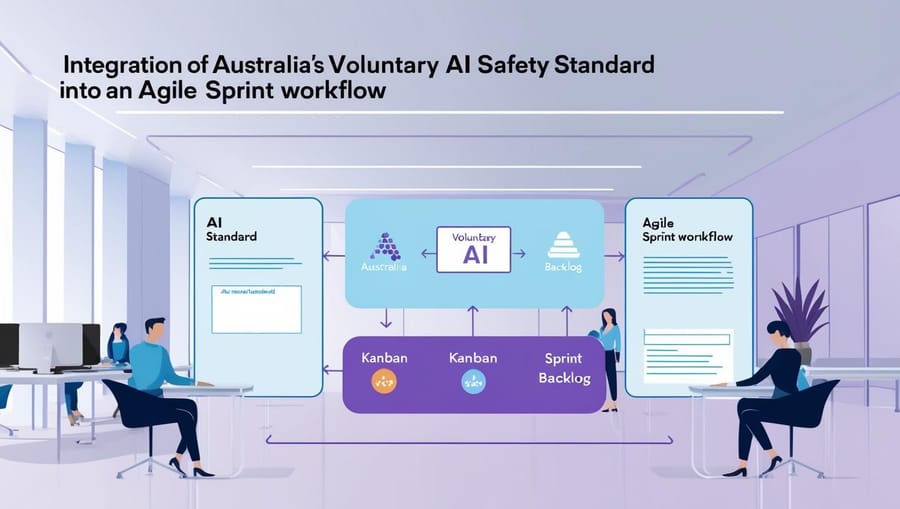

These guardrails mean:

- Doing Your Homework: Thorough risk checks before AI deployment.

- Clear Answers: Making AI’s decisions understandable, not hidden in a mysterious "black box."

- Constant Watch: Regular monitoring to catch and fix problems early.

- Accountability: Knowing exactly who’s responsible if something goes wrong.

- Protecting Data: Strong rules to ensure personal data stays secure and private.

These steps align Australia with international benchmarks such as the EU AI Act and the NIST AI Risk Management Framework, as well as with healthcare standards from Australia's Therapeutic Goods Administration (TGA).
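
To make the "Clear Answers" and "Accountability" guardrails a little more concrete, here is a minimal Python sketch of a decision record that keeps the outcome, plain-language reasons, and a named owner together. The `DecisionRecord` class, its field names, and the sample values are all hypothetical; nothing here is taken from an Australian regulation or standard.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One AI-assisted decision, kept for later review (hypothetical schema)."""
    system_name: str
    model_version: str
    outcome: str
    reasons: list[str]        # plain-language reasons a person can read
    accountable_owner: str    # the role answerable for this system
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the record so it can be stored or handed to a reviewer."""
        return json.dumps(asdict(self), indent=2)


# Made-up example values, for illustration only.
record = DecisionRecord(
    system_name="loan-screening",
    model_version="2024-10",
    outcome="referred_to_human",
    reasons=["income could not be verified", "short credit history"],
    accountable_owner="Head of Credit Risk",
)
print(record.to_json())
```

Keeping the reasons and the owner in the same record as the outcome means a reviewer does not have to reconstruct who was responsible, or why, after the fact.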

Real-Life Lessons: AI’s Hits and Misses

Let’s look at some scenarios that show what can go wrong:

- Banking Bias: Imagine a bank's AI rejecting loans for rural communities simply because its data favored city dwellers. Now, Aussie banks are actively addressing this bias through fairness checks.

- Healthcare Errors: An Australian hospital AI wrongly deprioritizes Indigenous patients because its training data overlooked their needs, creating delays in essential care. New guardrails require more inclusive data.

- Retail & Startups: A local retail startup sees customer data exposed due to weak AI security measures. New guardrails mean better data protections, keeping both customer trust and the business safe.

- Policing Pitfalls: Facial recognition AI used by police wrongly identifies innocent people. Guardrails demand better transparency and oversight to prevent these errors.

Privacy Matters: Protecting Australians in the AI Era

Privacy isn’t just a buzzword—it’s fundamental. With AI processing huge volumes of sensitive data daily, the stakes have never been higher. Data breaches can lead to identity theft, financial loss, and erosion of trust in critical services. Australian guardrails mandate enhanced data protections, ensuring businesses handle personal data responsibly. These privacy protections safeguard not only individuals but also businesses from costly reputational damage and regulatory penalties.

Startups and large corporations alike need robust privacy practices embedded deeply into their AI systems from the outset—prioritizing privacy isn’t optional; it’s essential for success in the AI age.
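
One small way to build privacy in from the outset is to strip direct identifiers before a record ever reaches an AI system. The Python sketch below assumes a record with fields named `customer_id`, `name`, `email`, and `phone`; those names, the `pseudonymize` helper, and the demo key are hypothetical. It is an illustration of the idea, not a complete privacy control.

```python
import hashlib
import hmac

# Assumed names of fields that identify a person directly.
DIRECT_IDENTIFIERS = {"name", "email", "phone"}


def pseudonymize(record: dict, secret_key: bytes) -> dict:
    """Drop direct identifiers and replace the customer ID with a keyed hash."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    # HMAC-SHA256 gives a stable token that cannot be recomputed without the key.
    cleaned["customer_id"] = hmac.new(
        secret_key,
        str(record["customer_id"]).encode("utf-8"),
        hashlib.sha256,
    ).hexdigest()
    return cleaned


# Placeholder key for the demo; a real key would live in a secrets manager.
demo_key = b"replace-with-a-managed-secret"
raw = {
    "customer_id": 1042,
    "name": "Sample Person",
    "email": "sample@example.com",
    "postcode": "2000",
}
print(pseudonymize(raw, demo_key))
```

The same customer always maps to the same token, so records can still be linked for analysis without the name or email travelling with them.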

Opportunities Ahead: AI Innovation within Safe Boundaries

Guardrails aren’t just about preventing harm—they’re also about creating room for innovation to thrive safely. Clear regulatory frameworks give businesses and startups the confidence to innovate, knowing they’re compliant and protected. Australian startups are already harnessing AI in ways that respect these guardrails, driving breakthroughs in personalized medicine, sustainable finance, and smarter, safer urban environments.

Knowing exactly where the boundaries are reduces uncertainty and fosters a competitive yet safe AI landscape.

How to Stay Ahead of the Curve

Australia’s guardrails are just the start. Privacy rules, algorithm fairness, and transparency standards will only get stronger. Here’s how you can adapt:

- Regular AI Check-ups: Continuously audit your AI for biases, data safety, and functionality.

- Be Transparent: Clearly document your AI decisions. Your customers and regulators will thank you.

- Train Your Team: Ensure everyone knows best practices for responsible AI.

- Stay Updated: Keep an eye on changes from ASIC, APRA, and the Office of the Australian Information Commissioner (OAIC).
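
A regular check-up can start very simply. The Python sketch below compares approval rates between two groups and flags a large gap for human review. The sample data is made up, the function names are hypothetical, and the 0.8 threshold is an assumption chosen for illustration, not a figure from ASIC, APRA, or the OAIC.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the share of approved decisions for each group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favoured
    return min(rates.values()) / highest


# Made-up sample of (group, approved?) pairs, not real lending data.
sample = (
    [("metro", True)] * 8 + [("metro", False)] * 2
    + [("rural", True)] * 5 + [("rural", False)] * 5
)

rates = approval_rates(sample)
ratio = disparity_ratio(rates)
REVIEW_THRESHOLD = 0.8  # assumed cut-off, for illustration only

print(rates)
print(f"disparity ratio: {ratio:.2f}")
if ratio < REVIEW_THRESHOLD:
    print("Flag for human review: approval rates differ notably between groups.")
```

In this made-up sample, metro applicants are approved 80% of the time and rural applicants 50%, so the check flags the gap. A single ratio like this is only a starting point; it says a difference exists, not why.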

Building Trust: It’s All About Transparency

Ultimately, these AI guardrails aim to build trust, ensuring technology lifts people up rather than lets them down. By embracing clear, practical rules informed by global and local best practices, Australia is betting on a future where AI serves everyone responsibly.

Ready for the responsible AI revolution? We'd love your thoughts—how are you adapting to these new rules?