HIMSS 2026 (March, Las Vegas) marked a clear turning point in the healthcare AI conversation.

For the past two years, much of the industry’s attention has focused on the “wow factor” of Generative AI—tools capable of summarizing clinical notes, answering patient questions, and generating medical documentation.

This year, the tone was different.

The conversation has shifted from what AI can generate to how AI systems can safely operate within healthcare workflows.

The result is a new phase of AI adoption defined by Agentic AI, operational governance, and continuous oversight.

For organizations deploying AI in clinical environments, the message from HIMSS is clear:

The era of experimental pilots is ending. Operational discipline is beginning.

The Rise of Agentic AI

One of the most widely discussed themes at HIMSS 2026 was the emergence of Agentic AI.

Unlike traditional generative AI systems that produce text or insights, agentic AI systems are designed to execute tasks across enterprise environments.

In healthcare, this means AI that can:

- analyze patient trends

- coordinate follow-up care

- automate revenue cycle processes

- manage claims appeals

- monitor patient safety signals

One of the most notable announcements came from Epic, which discussed new capabilities that let health systems build and orchestrate AI-driven workflows and agents within the EHR ecosystem. These agents are designed to reason over clinical data and trigger operational actions.

For example, an AI agent could monitor longitudinal patient data and flag emerging risks—such as deteriorating blood pressure trends or medication adherence issues—before a clinician manually reviews the record.
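To make the blood pressure example concrete, here is a minimal Python sketch of the kind of trend check such an agent might run. It is not Epic's implementation: the `Reading` type, the function names, and the thresholds (`min_readings`, `slope_threshold`) are hypothetical placeholders chosen for illustration, not clinical guidance.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass(frozen=True)
class Reading:
    day: date
    systolic: int  # mmHg


def slope_per_day(readings):
    """Least-squares slope of systolic pressure over time, in mmHg per day."""
    first_day = readings[0].day
    xs = [(r.day - first_day).days for r in readings]
    ys = [r.systolic for r in readings]
    x_bar, y_bar = mean(xs), mean(ys)
    denom = sum((x - x_bar) ** 2 for x in xs)
    if denom == 0:  # all readings were taken on the same day
        return 0.0
    return sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / denom


def flag_rising_bp(readings, min_readings=4, slope_threshold=0.5):
    """Return True when enough readings exist and the fitted trend is rising.

    Both thresholds are illustrative assumptions, not clinical cut-offs.
    """
    ordered = sorted(readings, key=lambda r: r.day)
    if len(ordered) < min_readings:
        return False
    return slope_per_day(ordered) >= slope_threshold


# Hypothetical weekly readings for one patient.
history = [
    Reading(date(2026, 1, 5), 120),
    Reading(date(2026, 1, 12), 126),
    Reading(date(2026, 1, 19), 133),
    Reading(date(2026, 1, 26), 141),
]
print(flag_rising_bp(history))
```

In a real deployment the flag would feed a clinician's review queue rather than trigger an action on its own.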

Other vendors showcased similar capabilities, including XiFin and Sword Health, which demonstrated AI-driven automation for revenue cycle management and patient safety monitoring.

Across the HIMSS floor, the message was consistent:

Healthcare AI is evolving from copilots to operational agents.

“We’re moving from copilots to coworkers.”

Continuous Clinical Oversight

Agentic AI brings a familiar problem into sharper focus: the performance of deployed AI systems can degrade over time.

Clinical environments change constantly. Data patterns shift, treatment protocols evolve, and operational workflows adapt.

As a result, regulators and healthcare leaders are increasingly emphasizing the need for continuous oversight of AI systems.

During several HIMSS sessions, officials from HHS and the FDA stressed the importance of monitoring clinical AI tools after deployment.

The industry is beginning to describe this approach as Continuous Clinical Oversight.

In practice, this means:

- monitoring model performance in real time

- detecting model drift or degraded accuracy

- supervising agent behavior across workflows

- implementing governance mechanisms that intervene when anomalies appear

In other words, AI systems must be treated less like static software and more like dynamic infrastructure.
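One simple way to picture the monitoring items above is a rolling accuracy check that compares recent performance with the accuracy measured at validation. The sketch below assumes ground-truth outcomes eventually arrive for each prediction; the `AccuracyMonitor` class, its window size, and the allowed drop are hypothetical values for illustration only.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over the most recent predictions and flags a drop."""

    def __init__(self, baseline, window=100, max_drop=0.05):
        self.baseline = baseline      # accuracy measured at validation time
        self.max_drop = max_drop      # tolerated drop before flagging
        self.outcomes = deque(maxlen=window)

    def record(self, prediction, actual):
        """Store whether one prediction matched the observed outcome."""
        self.outcomes.append(prediction == actual)

    def rolling_accuracy(self):
        """Accuracy over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self):
        """True only when the window is full and accuracy fell too far."""
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and (self.baseline - self.rolling_accuracy()) > self.max_drop
```

A governance mechanism could poll `degraded()` and route the model to human review or a fallback workflow when it returns `True`.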

“AI systems don’t fail suddenly—they drift quietly.”

Governance Is Becoming an Operational Capability

Another major shift at HIMSS was the evolution of AI governance from policy to practice.

Until recently, Responsible AI discussions often focused on high-level principles such as fairness, transparency, and accountability.

Healthcare organizations are now asking more practical questions:

- How do we monitor AI systems in production?

- How do we produce audit evidence for regulators?

- How do we prevent unauthorized AI tools from entering clinical workflows?

The AI in Healthcare Forum at HIMSS26 highlighted a growing consensus: credibility now depends on measurable outcomes and governance evidence, not just innovation.

Three governance capabilities are emerging as essential.

Continuous Control Monitoring

Healthcare organizations are shifting away from periodic audits toward continuous monitoring of AI systems.

Live telemetry, performance metrics, and monitoring dashboards allow organizations to detect risks before they become clinical problems.
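As one example of a telemetry metric, the Population Stability Index (PSI) compares the distribution of a model input or score in production with its distribution at validation. The Python sketch below is a minimal version under stated assumptions: the bin edges are supplied by the caller in ascending order, out-of-range values are clamped into the end bins, and the function names are hypothetical. A commonly quoted rule of thumb treats a PSI above roughly 0.25 as a large shift, but any alert threshold should be set locally.

```python
import bisect
import math


def bin_proportions(values, edges, floor=1e-6):
    """Share of values per bin; edges must be sorted in ascending order.

    Values outside the edges are clamped into the first or last bin, and
    empty bins are floored so the logarithm below stays defined.
    """
    if not values:
        raise ValueError("need at least one value")
    counts = [0] * (len(edges) - 1)
    for v in values:
        clamped = min(max(v, edges[0]), edges[-1])
        i = min(bisect.bisect_right(edges, clamped) - 1, len(counts) - 1)
        counts[i] += 1
    return [max(c / len(values), floor) for c in counts]


def population_stability_index(baseline, recent, edges):
    """PSI between a baseline sample and a recent sample over shared bins."""
    p_base = bin_proportions(baseline, edges)
    p_recent = bin_proportions(recent, edges)
    return sum((r - b) * math.log(r / b) for b, r in zip(p_base, p_recent))


# Hypothetical risk scores at validation time and in the latest week.
edges = [0.0, 0.5, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
recent = [0.6, 0.7, 0.8, 0.9, 0.6, 0.7, 0.8, 0.1]
print(round(population_stability_index(baseline, recent, edges), 3))
```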

Evidence-Based Governance

Artifacts such as model cards, evaluation reports, and audit documentation are becoming essential for demonstrating Responsible AI practices.

Health systems increasingly need to show regulators how AI systems are validated, monitored, and updated.
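A model card becomes more useful as audit evidence when it is stored as structured data rather than a free-form document. The sketch below shows one possible shape in Python. The field names are assumptions made for illustration and do not follow any specific regulator or CHAI schema, and the values are placeholders rather than real results. The SHA-256 digest simply makes later edits to a stored record detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModelCard:
    model_name: str
    version: str
    intended_use: str
    validation_dataset: str
    metrics: dict
    last_reviewed: str  # ISO 8601 date


def to_audit_record(card):
    """Serialize the card deterministically and attach a content digest."""
    payload = json.dumps(asdict(card), sort_keys=True)
    digest = hashlib.sha256(payload.encode("utf-8")).hexdigest()
    return {"card": json.loads(payload), "sha256": digest}


# Placeholder values for illustration only.
card = ModelCard(
    model_name="example-risk-model",
    version="1.0",
    intended_use="Decision support; clinician review required",
    validation_dataset="internal-holdout-placeholder",
    metrics={"auroc": 0.87},
    last_reviewed="2026-03-01",
)
record = to_audit_record(card)
print(record["sha256"][:12])
```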

Eliminating Shadow AI

Another concern raised repeatedly at HIMSS was the growth of shadow AI.

Clinicians and staff are experimenting with external AI tools outside formal governance frameworks.

Healthcare organizations are responding by introducing permission frameworks and centralized AI governance platforms to ensure that only vetted systems operate in clinical environments.
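At its simplest, a permission framework is a deny-by-default registry: a tool may run in a given context only if it has been explicitly approved for it. The Python sketch below illustrates that idea. The registry contents, tool names, and context labels are hypothetical and not drawn from any vendor's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovedTool:
    name: str
    allowed_contexts: frozenset  # contexts this tool has been vetted for


def is_permitted(tool_name, context, registry):
    """Deny by default: unknown tools and unapproved contexts are rejected."""
    tool = registry.get(tool_name)
    return tool is not None and context in tool.allowed_contexts


# Hypothetical registry maintained by an AI governance committee.
registry = {
    "ambient-scribe": ApprovedTool(
        "ambient-scribe", frozenset({"clinical-documentation"})
    ),
    "coding-assistant": ApprovedTool(
        "coding-assistant", frozenset({"revenue-cycle"})
    ),
}

print(is_permitted("ambient-scribe", "clinical-documentation", registry))
print(is_permitted("public-chatbot", "clinical-documentation", registry))
```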

“The Wild West phase of AI pilots is ending.”

Human-in-the-Loop Still Matters

Despite the excitement around autonomous systems, healthcare leaders repeatedly emphasized that human oversight remains essential.

Panels featuring CMIOs from Northwestern Medicine and MUSC stressed that AI tools must integrate seamlessly into clinical workflows.

Standalone AI applications often fail because they require clinicians to leave the electronic health record environment.

Successful AI systems embed intelligence directly within the EHR interface, allowing clinicians to review and validate AI outputs without disrupting their workflow.

The goal is not to replace clinicians but to reduce their cognitive burden.

Responsible AI Is Becoming Part of the Security Stack

Cybersecurity remained a major theme at HIMSS, and the connection between AI governance and cybersecurity is becoming increasingly clear.

Healthcare organizations already face significant cyber threats, including ransomware attacks targeting hospital systems.

AI introduces additional complexity because these systems require access to:

- sensitive patient data

- enterprise APIs

- operational workflows

Each of these represents a potential vulnerability.

As a result, many organizations are beginning to integrate AI governance directly into their security architecture.

Some discussions described this emerging approach as Zero-Trust AI, where AI systems are treated as privileged actors that require continuous authentication, monitoring, and policy enforcement.
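In code, the Zero-Trust idea reduces to checking every agent action against a short-lived, narrowly scoped credential and logging the decision. The following Python sketch shows that pattern under simplifying assumptions: the `AgentCredential` type, the scope strings, and the 15-minute lifetime are hypothetical, and a production system would rely on an identity provider rather than in-memory objects.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentCredential:
    agent_id: str
    scopes: frozenset
    expires_at: datetime


def authorize(credential, required_scope, now, audit_log):
    """Check one agent action and append the decision to the audit log."""
    if now >= credential.expires_at:
        decision = "deny:expired"
    elif required_scope not in credential.scopes:
        decision = "deny:scope"
    else:
        decision = "allow"
    audit_log.append(
        (now.isoformat(), credential.agent_id, required_scope, decision)
    )
    return decision == "allow"


# Hypothetical agent with a 15-minute credential.
issued = datetime(2026, 3, 10, 9, 0, tzinfo=timezone.utc)
credential = AgentCredential(
    agent_id="claims-appeal-agent",
    scopes=frozenset({"claims:read", "claims:appeal"}),
    expires_at=issued + timedelta(minutes=15),
)
log = []
authorize(credential, "claims:read", issued + timedelta(minutes=5), log)    # allowed
authorize(credential, "patient:write", issued + timedelta(minutes=5), log)  # denied: scope
authorize(credential, "claims:read", issued + timedelta(minutes=20), log)   # denied: expired
```

Every call is logged, whether it is allowed or denied, so the log doubles as monitoring input and audit evidence.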

According to HIMSS discussions, 48% of healthcare leaders cite cybersecurity and data privacy as the primary barriers to AI adoption.

“Responsible AI is quickly becoming part of the security stack.”

Regulation Is Catching Up

The regulatory landscape for healthcare AI remains fragmented, but it is becoming increasingly structured as federal agencies begin aligning their approaches to AI oversight.

At the HIMSS/AMDIS Physicians’ Forum, federal officials discussed how regulators are adapting to the rapid evolution of AI technologies in healthcare.

Arman Sharma, Deputy Chief AI Officer and Senior Advisor at the U.S. Department of Health and Human Services (HHS), emphasized that healthcare organizations need clear signals from regulators as AI adoption accelerates across the industry.

Meanwhile, representatives from the Food and Drug Administration (FDA) noted that regulators are exploring how best to oversee AI systems that evolve over time.

Agentic AI presents particular challenges because autonomous systems may adapt their behavior and interact dynamically with healthcare workflows.

One emerging regulatory direction is a hybrid oversight model that combines:

- pre-market evaluation of AI systems

- continuous post-market monitoring of real-world performance

Regulators also highlighted that traditional human oversight alone may not scale as AI systems become more autonomous. Some researchers are therefore exploring the concept of supervisory AI systems that monitor and evaluate deployed AI agents in real time.

Alongside regulatory guidance, many healthcare organizations are aligning their governance programs with emerging frameworks such as:

- NIST AI Risk Management Framework (AI RMF)

- ISO/IEC 42001 (AI management systems)

These frameworks are increasingly serving as operational blueprints for Responsible AI governance in healthcare environments.

Industry Alliances Are Driving Responsible AI

Healthcare AI governance is increasingly being shaped through collaborative industry initiatives rather than isolated efforts by individual vendors or health systems.

One of the most prominent initiatives is the Coalition for Health AI (CHAI), a multi-stakeholder coalition bringing together health systems, technology companies, policymakers, researchers, and patient advocates to establish practical standards for responsible AI deployment in healthcare.

At HIMSS 2026, CHAI CEO Brian Anderson, MD, joined leaders from Microsoft, Frederick Health, and MEDITECH for a panel discussion on how ambient and generative AI are transforming documentation, coding workflows, and revenue integrity. The discussion highlighted the growing need for governance frameworks as AI systems move deeper into operational healthcare workflows.

During the conference, CHAI also released its 2025 Impact Report, outlining progress in building consensus standards and governance tools for healthcare AI. The report reflects a growing community of health systems, technology providers, and policymakers working together to define how AI should be evaluated, monitored, and trusted in clinical environments.

As AI moves into increasingly consequential healthcare use cases, initiatives like CHAI are helping establish shared governance practices, validation standards, and accountability mechanisms that allow innovation to scale while preserving patient safety.

Strategic Partnerships Reflect the Push for Trusted AI

Several partnerships announced at HIMSS reinforce the industry’s focus on trusted AI ecosystems.

One notable example is the collaboration between Wolters Kluwer and Microsoft, which integrates UpToDate’s peer-reviewed clinical knowledge directly into Microsoft Copilot and Teams.

The goal is to ensure that generative AI outputs are grounded in validated clinical evidence rather than open web content.

Another development came from athenahealth, which introduced a Model Context Protocol (MCP) server allowing authorized AI agents to securely access patient data with explicit patient consent.

This architecture could become foundational for interoperable AI agents across healthcare platforms.
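The consent requirement can be pictured as a gate evaluated before any data is returned to an agent. The Python sketch below is a generic illustration of such a gate. It is not athenahealth's implementation and does not use any MCP SDK; the `ConsentGrant` type, its fields, and the category names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ConsentGrant:
    patient_id: str
    agent_id: str
    data_categories: frozenset
    valid_until: date
    revoked: bool = False


def may_access(grants, patient_id, agent_id, category, today):
    """True only if an unrevoked, unexpired grant covers this exact request."""
    return any(
        g.patient_id == patient_id
        and g.agent_id == agent_id
        and category in g.data_categories
        and not g.revoked
        and today <= g.valid_until
        for g in grants
    )


# Hypothetical grant: one patient lets one agent read medication data.
grants = [
    ConsentGrant(
        patient_id="patient-001",
        agent_id="care-coordination-agent",
        data_categories=frozenset({"medications"}),
        valid_until=date(2026, 12, 31),
    )
]
print(may_access(grants, "patient-001", "care-coordination-agent",
                 "medications", date(2026, 6, 1)))
```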

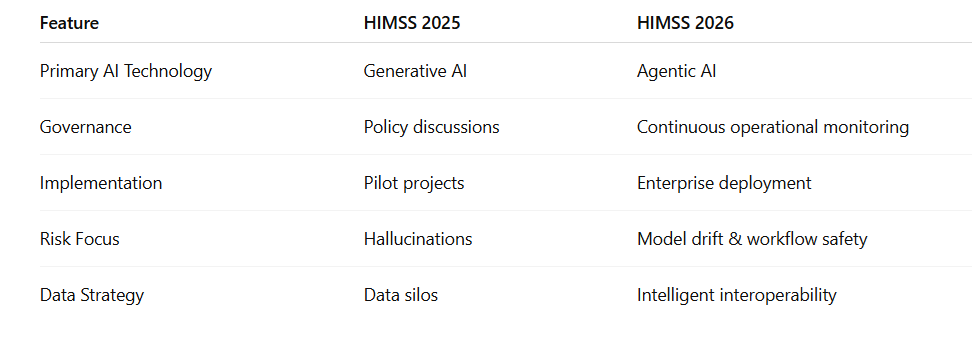

HIMSS 2025 vs HIMSS 2026

The Big Takeaway

HIMSS 2026 revealed a decisive shift in the evolution of healthcare AI.

The industry is moving beyond experimentation with generative AI toward operational systems capable of reasoning, acting, and coordinating complex workflows. AI is no longer confined to copilots and productivity tools—it is increasingly becoming part of the operational fabric of healthcare systems.

As this transformation accelerates, the defining challenge is no longer just technological innovation.

It is governance.

Healthcare organizations must now build the capabilities required to:

- continuously monitor AI systems in production

- govern autonomous agents operating within clinical workflows

- generate audit-ready evidence for compliance and oversight

- maintain trust with clinicians, regulators, and patients

In the years ahead, the success of healthcare AI will not be defined solely by algorithmic breakthroughs or model performance.

It will be defined by whether organizations can build the governance infrastructure required to deploy AI safely, responsibly, and at scale.

Because in healthcare, innovation alone is not enough.

Trust must scale with it.