Explainable AI: Why AI Transparency Matters for Compliance and Trust

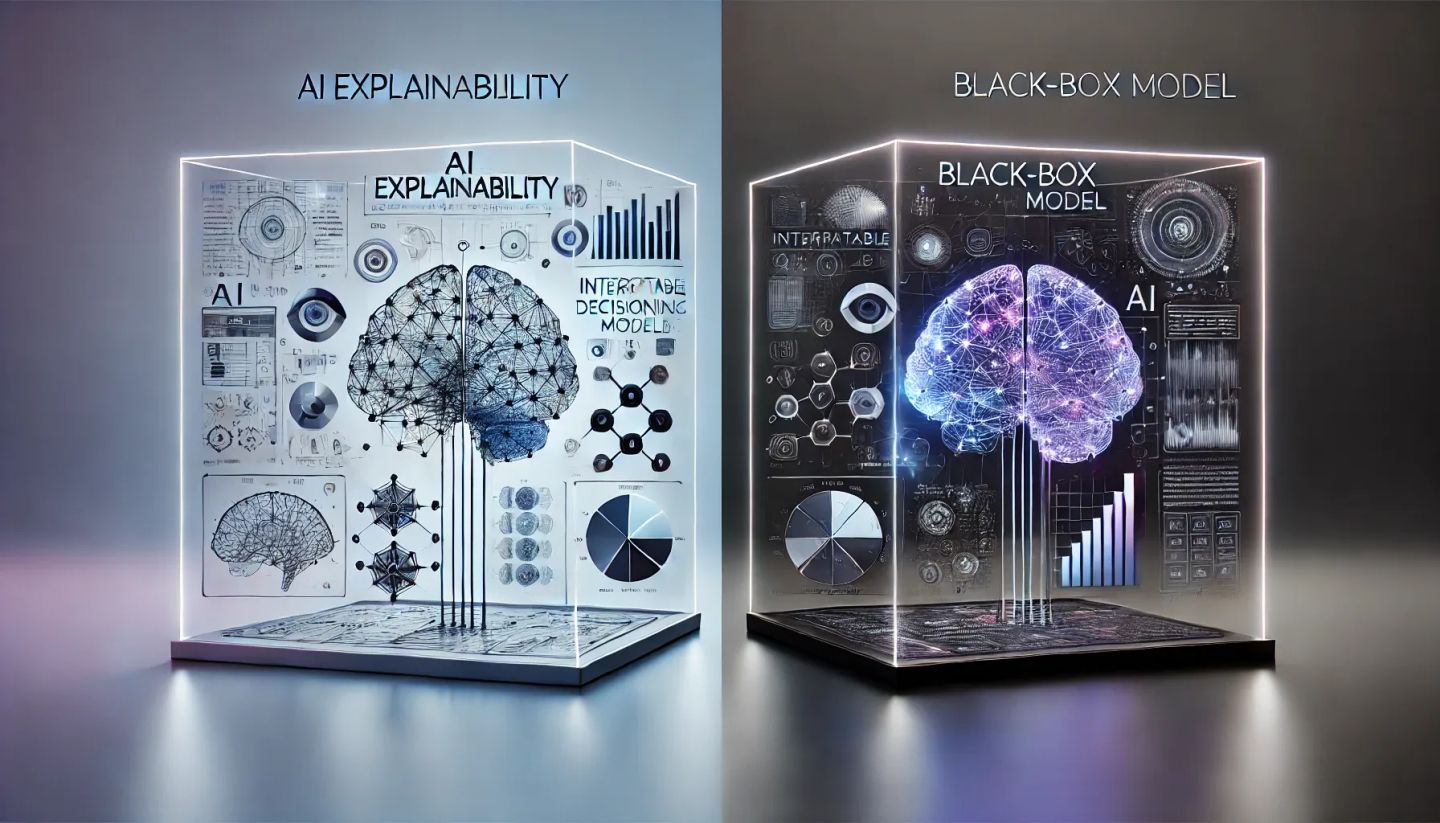

Introduction: Why Explainable AI is Critical for Compliance

As artificial intelligence adoption grows, regulators are demanding greater AI transparency and accountability. AI systems make critical decisions in finance, healthcare, hiring, and legal sectors, yet many operate as black boxes without clear explanations. Regulations such as the EU AI Act, GDPR