What is the EU AI Act? A Step-by-Step Compliance Guide

AI is reshaping industries, driving innovation, and transforming business landscapes worldwide. But as artificial intelligence becomes more integrated into our daily lives, risks around privacy, safety, and fairness have surged. Enter the EU AI Act—the world's first comprehensive regulatory framework specifically designed to govern AI technology. Is your organization ready to navigate this new compliance landscape?

Understanding the EU AI Act: What's the Big Deal?

The EU AI Act, proposed by the European Commission in 2021 and in force since August 2024, sorts AI systems into four risk tiers: minimal, limited, high, and prohibited (unacceptable risk). This risk-based approach lets organizations concentrate compliance effort where the obligations are heaviest, with an emphasis on transparency, accountability, and sound governance practices.

Breaking Down the Compliance Journey

To simplify your path to compliance, let's break the process down step by step:

Step 1: Assessing AI System Risks

Accurately classifying your AI systems based on the EU’s risk framework is foundational for compliance. Here are detailed categories with practical examples and scenarios:

- Minimal Risk: Systems that pose negligible or no risk to individuals or society. Examples include:
  - Email spam filters
  - Standard analytics tools for internal business insights
  - Inventory forecasting systems
- Limited Risk: Systems requiring clear transparency to ensure users are aware they're interacting with AI. Examples include:
  - Customer service chatbots on retail or banking websites
  - Virtual assistants embedded in consumer electronics
  - AI-driven recommendation engines on streaming platforms
- High Risk: AI systems with significant potential impacts on personal safety, fundamental rights, or economic decisions. Rigorous oversight and compliance procedures are essential. Real-world scenarios include:
  - Recruitment: AI platforms screening CVs, ranking job applicants, and influencing hiring decisions. Companies must ensure fairness, transparency, and mechanisms to contest decisions.
  - Healthcare: AI-powered diagnostic imaging systems used in hospitals, requiring strict validation, transparency of decision logic, and comprehensive risk documentation.
  - Finance: AI systems used for credit scoring, determining loan eligibility, or investment management, necessitating transparency in decision-making processes and robust audit trails.
  - Transportation: Autonomous driving systems in vehicles, drones for deliveries, or traffic management systems, where failures could directly impact human safety.
  - Education: AI systems grading student exams or determining educational access, requiring transparency, fairness audits, and clear recourse mechanisms.
- Banned Systems: Systems considered ethically unacceptable due to their profound negative societal impacts. Examples include:
  - Real-time remote biometric identification (such as facial recognition) in publicly accessible spaces by law enforcement, outside a narrow set of exceptions
  - Social scoring systems used by governments or institutions to restrict rights or access to services based on AI assessments
  - AI-enabled manipulation or exploitation of vulnerable individuals

Real-world Example: A fintech startup using AI to automate loan approvals must classify its system as high-risk. It must ensure transparent decision-making criteria, fairness checks to prevent discrimination, detailed documentation of algorithms and data sources, and an auditable decision trail to satisfy regulatory reviews.
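To make the classification step concrete, here is a minimal Python sketch of an internal AI inventory that maps each system's use case to a risk tier. The `RiskTier` enum, the `USE_CASE_TIERS` table, and the use-case labels are hypothetical names built from the examples in this guide. They are an illustration only, not a legal determination under the Act, and any use case that is not listed is deliberately routed to manual review.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    PROHIBITED = "prohibited"


# Hypothetical lookup table built from the examples in this guide.
# A real classification needs legal review of the Act itself.
USE_CASE_TIERS = {
    "spam_filter": RiskTier.MINIMAL,
    "inventory_forecasting": RiskTier.MINIMAL,
    "customer_chatbot": RiskTier.LIMITED,
    "cv_screening": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "diagnostic_imaging": RiskTier.HIGH,
    "exam_grading": RiskTier.HIGH,
    "social_scoring": RiskTier.PROHIBITED,
}


@dataclass
class AISystem:
    name: str
    use_case: str


def classify(system: AISystem) -> Optional[RiskTier]:
    """Return the tier for a known use case, or None to flag manual review."""
    return USE_CASE_TIERS.get(system.use_case)


loan_tool = AISystem(name="Loan approval engine", use_case="credit_scoring")
print(loan_tool.name, "->", classify(loan_tool))
```

Returning `None` for unknown use cases, rather than defaulting to minimal risk, keeps unreviewed systems from silently slipping into the lowest tier.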

Step 2: Building Robust Governance & Documentation

Effective AI governance begins with thorough documentation and transparent processes. Organizations must clearly outline their AI systems' technical specifications, operational methodologies, and risk mitigation strategies. Here are practical actions and examples to consider:

- Comprehensive AI Documentation:
  - Record AI model architectures, training data sets, and validation procedures clearly and thoroughly.
  - Maintain detailed records of algorithm changes, updates, and iterations over time.
  - Example: A healthcare provider using AI-driven diagnostics documents every update to its algorithms, including the datasets used, validation results, and improvements made to address biases.
- Transparent Risk Mitigation Strategies:
  - Develop and document procedures to identify, assess, and mitigate potential AI-related risks systematically.
  - Clearly outline roles and responsibilities for risk management and compliance oversight.
  - Example: A financial institution deploying AI for credit scoring systematically records risk scenarios such as data bias or algorithmic drift, alongside specific strategies employed to mitigate these risks.
- Regular Governance Reviews and Updates:
  - Conduct periodic internal audits and reviews to ensure documentation aligns with current AI models and regulatory standards.
  - Update documentation to reflect regulatory changes or technological advancements promptly.
  - Example: An autonomous vehicle manufacturer regularly revisits and revises its AI system documentation following software updates, capturing new safety checks and operational standards required by updated regulatory guidelines.
- Cross-Functional Collaboration:
  - Facilitate collaboration across legal, technical, compliance, and business teams to ensure AI governance comprehensively addresses all regulatory, ethical, and operational concerns.
  - Example: A large retail company establishes an AI governance committee comprising data scientists, legal advisors, and business unit representatives to oversee documentation accuracy, compliance monitoring, and risk mitigation strategies.

Robust governance and documentation not only support regulatory compliance but also build stakeholder trust, facilitating smoother operations and improved risk management across your organization.
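One way to keep the documentation described above consistent is to store it as structured records rather than free-form text. The sketch below is one possible shape for such a record, not a format required by the Act. The class names `ModelRecord` and `ChangeEntry`, their fields, and the sample values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ChangeEntry:
    version: str
    changed_on: str          # ISO date, e.g. "2025-01-15"
    summary: str
    datasets: List[str]
    validation_result: str


@dataclass
class ModelRecord:
    system_name: str
    architecture: str
    risk_owner: str          # person or team accountable for risk oversight
    changes: List[ChangeEntry] = field(default_factory=list)

    def log_change(self, entry: ChangeEntry) -> None:
        """Append a change so the history is never overwritten."""
        self.changes.append(entry)

    def to_json(self) -> str:
        """Serialize the full record, including the change history."""
        return json.dumps(asdict(self), indent=2)


record = ModelRecord(
    system_name="Diagnostic imaging model",
    architecture="Convolutional neural network",
    risk_owner="Clinical AI governance committee",
)
record.log_change(ChangeEntry(
    version="1.1",
    changed_on="2025-01-15",
    summary="Retrained on a more balanced dataset to address bias",
    datasets=["imaging_set_v2"],
    validation_result="passed internal validation",
))
print(record.to_json())
```

Because every update is appended rather than edited in place, the record doubles as the change history that auditors typically ask for.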

Step 3: Implementing Risk Management Controls

Once AI systems are accurately categorized and documented, organizations must implement proactive risk management controls. Aligning these practices with recognized frameworks (such as the NIST AI Risk Management Framework and ISO/IEC 42001) enhances compliance, transparency, and reliability. Here are key steps and practical examples:

- Proactive Risk Assessment & Mitigation:
  - Regularly conduct comprehensive risk assessments for AI systems, evaluating potential bias, fairness, privacy concerns, and safety hazards.
  - Implement controls such as bias testing, fairness audits, and data integrity checks.
  - Example: A recruitment firm using AI-driven candidate screening runs quarterly bias audits, adjusting algorithms and datasets to eliminate discriminatory hiring practices.
- Continuous Monitoring & Auditing:
  - Deploy continuous monitoring systems to detect deviations or anomalies in AI system performance.
  - Set automated alerts for unusual activity, system failures, or unexpected outcomes to trigger timely interventions.
  - Example: An insurance company leveraging AI for claim processing integrates continuous monitoring software, triggering alerts whenever the AI system shows unusual claim approval rates or patterns, ensuring swift corrective actions.
- Incident Response Planning & Management:
  - Develop clear procedures for swiftly identifying, responding to, and documenting AI-related incidents, including system malfunctions, data breaches, or ethical violations.
  - Clearly assign roles and responsibilities for incident handling to reduce response times and impact.
  - Example: A hospital using AI diagnostics maintains a detailed incident response plan, enabling immediate isolation and review of the AI system if an unexpected diagnostic error occurs, thereby safeguarding patient safety and compliance.
- Risk-Based Access & Operational Controls:
  - Establish robust access control mechanisms to ensure only authorized personnel interact with sensitive AI systems or datasets.
  - Regularly review access logs and permissions to minimize operational risks.
  - Example: A fintech startup using AI to automate loan approvals strictly enforces role-based access controls, limiting sensitive algorithm adjustments or data modifications to senior compliance and technical teams, with comprehensive audit trails documenting any access or changes.

Implementing these robust risk management controls helps organizations remain compliant with EU AI Act requirements, minimize risks, and foster stakeholder confidence in AI operations.
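As an illustration of the bias testing mentioned above, the following sketch compares selection rates across groups in a candidate-screening log. The function names are hypothetical. The 0.8 threshold is an assumption borrowed from the "four-fifths" heuristic used in US employment guidance; the EU AI Act does not prescribe this number, so treat it as a placeholder for whatever threshold your own policy sets.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Compute the share of selected candidates per group.

    `outcomes` is an iterable of (group_label, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so no disparity to measure
    return min(rates.values()) / highest


def audit(outcomes, threshold=0.8):
    """Flag the screening log when the impact ratio falls below the threshold."""
    rates = selection_rates(outcomes)
    ratio = impact_ratio(rates)
    return {"rates": rates, "ratio": ratio, "flagged": ratio < threshold}


# Toy data: group A has 5 of 10 selected, group B has 3 of 10 selected.
log = [("A", i < 5) for i in range(10)] + [("B", i < 3) for i in range(10)]
print(audit(log))
```

A flagged result is a prompt for human investigation of the model and data, not proof of discrimination on its own.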

Step 4: Ensuring Transparency & Explainability

Transparency and explainability are cornerstones of AI compliance under the EU AI Act. Organizations must clearly communicate how their AI systems operate, make decisions, and interact with users, regulators, and stakeholders. Practical approaches include:

- Transparent Communication Practices:
  - Clearly inform users whenever they interact with AI systems, including the nature and purpose of the AI's involvement.
  - Provide easy-to-understand disclosures about how user data is processed by AI.
  - Example: An online retail platform explicitly informs customers when personalized product recommendations are AI-generated, detailing the data used (such as browsing history or previous purchases).
- Explainable AI (XAI):
  - Implement technologies that provide clear explanations for AI-driven decisions, enabling users and regulators to understand how conclusions are reached.
  - Prioritize transparency in sensitive areas like healthcare, finance, and employment decisions.
  - Example: A banking institution utilizing AI for loan approvals provides applicants with concise explanations outlining the specific reasons behind loan acceptance or rejection, including factors such as credit history, income verification, and algorithmic weighting.
- Audit Trails and Documentation:
  - Maintain thorough documentation and audit trails that track AI decision-making processes from input data through to outcomes.
  - Ensure these records are accessible for regulatory review, internal audits, and dispute resolution.
  - Example: An insurance provider employs detailed logging systems for its AI-powered claim assessments, documenting each decision step, enabling both transparency during regulatory audits and improved internal oversight.
- Feedback and Redress Mechanisms:
  - Establish clear procedures for users to provide feedback, ask questions, or challenge AI-driven decisions.
  - Ensure feedback processes are accessible, timely, and responsive.
  - Example: An HR tech company using AI for talent assessment establishes a structured appeal process, allowing candidates to question or dispute automated hiring decisions, ensuring fairness and trust.

By embedding transparency and explainability into AI operations, organizations not only comply with regulatory demands but also build lasting trust among customers, partners, and regulators.
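The loan-explanation and audit-trail practices above can be sketched with a deliberately simple model. The example assumes a toy linear scoring model in which each factor's contribution is just its weight times its value. The weights, threshold, and feature names are invented for illustration, and real credit models usually need dedicated explainability tooling rather than this shortcut.

```python
import json
from datetime import datetime, timezone

# Hypothetical weights and threshold for a toy linear scoring model.
WEIGHTS = {
    "credit_history_years": 0.4,
    "income_verified": 2.0,
    "debt_to_income": -3.0,
}
APPROVAL_THRESHOLD = 2.0


def decide(applicant_id, features):
    """Score an applicant and return a record that explains the outcome."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = sum(contributions.values())
    # Rank factors from the one that lowered the score most to the one
    # that raised it most.
    ranked = sorted(contributions.items(), key=lambda item: item[1])
    return {
        "applicant_id": applicant_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "inputs": features,
        "contributions": contributions,
        "score": score,
        "approved": score >= APPROVAL_THRESHOLD,
        "lowest_contribution": ranked[0][0],
        "highest_contribution": ranked[-1][0],
    }


def to_log_line(record):
    """Serialize one decision as a JSON line for an append-only audit log."""
    return json.dumps(record, sort_keys=True)


decision = decide("applicant-001", {
    "credit_history_years": 6,
    "income_verified": 1,
    "debt_to_income": 0.5,
})
print(to_log_line(decision))
```

The same record serves both audiences: the ranked contributions can feed the plain-language explanation given to the applicant, while the full JSON line goes into the audit trail for regulators and internal reviewers.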

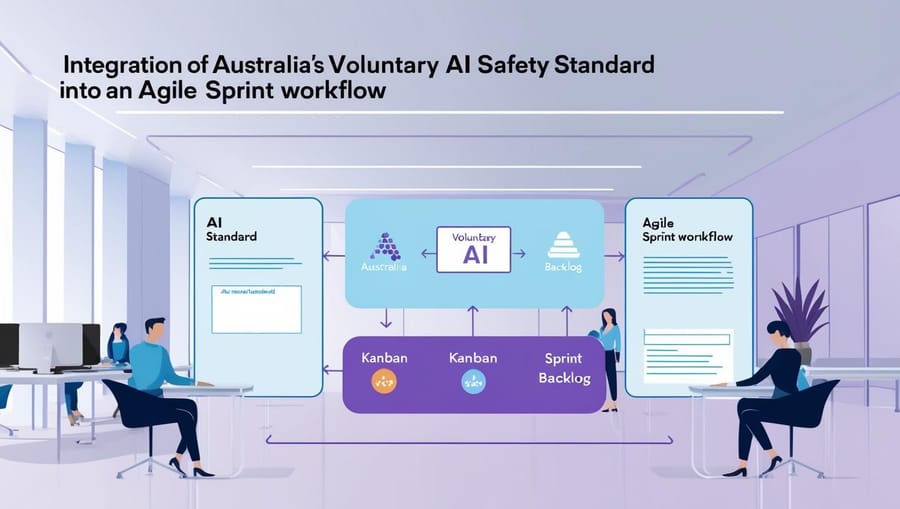

Step 5: Ongoing Compliance & Continuous Improvement

Compliance with the EU AI Act isn't a one-time event—it's an ongoing journey requiring continuous monitoring, refinement, and adaptation. Organizations must embed continuous improvement into their AI governance practices through regular audits, responsive updates, and proactive engagement with emerging standards. Here are essential practices and practical examples:

- Continuous Monitoring and Reporting:
  - Regularly track AI systems for compliance with established benchmarks and regulatory criteria.
  - Leverage AI governance software platforms for automated compliance monitoring and real-time risk alerts.
  - Example: A financial services firm integrates an AI governance platform that continuously monitors algorithmic trading systems, providing instant notifications if compliance metrics deviate from regulatory thresholds.
- Periodic AI System Audits and Reviews:
  - Schedule periodic internal and external audits to proactively identify compliance gaps, performance issues, or emerging risks.
  - Engage third-party auditors periodically to ensure objectivity and thorough oversight.
  - Example: A healthcare organization deploying AI-powered diagnostics schedules biannual external audits to validate clinical accuracy, data privacy compliance, and fairness benchmarks, proactively addressing issues before regulatory reviews.
- Adaptive AI Governance Framework:
  - Regularly update governance frameworks and documentation to reflect evolving regulations, technology advancements, and stakeholder feedback.
  - Create feedback loops to incorporate regulatory changes swiftly and efficiently.
  - Example: An e-commerce company regularly revises its AI governance framework to align with emerging EU AI Act interpretations, promptly adjusting transparency standards for product recommendation algorithms based on new regulatory guidance.
- Training & Awareness Programs:
  - Conduct ongoing training for staff involved in developing, operating, or overseeing AI systems to ensure continuous awareness of compliance obligations.
  - Provide resources and updates reflecting the latest AI governance practices and regulatory trends.
  - Example: A global tech firm establishes quarterly AI compliance workshops, ensuring that development teams, product managers, and compliance officers remain updated on the EU AI Act requirements and best practices in risk management.

Embedding ongoing compliance and continuous improvement into your organization's culture ensures sustained alignment with the EU AI Act and positions your organization to adapt proactively to future regulatory challenges.
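The continuous monitoring described in this step can start small. The sketch below tracks a rolling approval rate and raises an alert when it moves too far from an expected baseline. The `ApprovalRateMonitor` class, its parameters, and the sample numbers are hypothetical; a production setup would more likely rely on a dedicated monitoring or governance platform.

```python
from collections import deque


class ApprovalRateMonitor:
    """Track a rolling approval rate and alert when it drifts from a baseline."""

    def __init__(self, baseline_rate, tolerance, window_size):
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.window = deque(maxlen=window_size)  # keeps only the latest decisions

    def record(self, approved):
        """Add one decision; return an alert string or None."""
        self.window.append(1 if approved else 0)
        if len(self.window) < self.window.maxlen:
            return None  # not enough data yet for a stable rate
        rate = sum(self.window) / len(self.window)
        if abs(rate - self.baseline_rate) > self.tolerance:
            return (
                f"ALERT: approval rate {rate:.2f} deviates from "
                f"baseline {self.baseline_rate:.2f}"
            )
        return None


monitor = ApprovalRateMonitor(baseline_rate=0.5, tolerance=0.2, window_size=4)
for outcome in [True, True, True, True, False, False]:
    alert = monitor.record(outcome)
    if alert:
        print(alert)
```

Waiting for a full window before alerting avoids false alarms from the first few decisions, at the cost of a short delay before the monitor becomes active.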

Emerging Trends: What’s Next for AI Governance?

As AI continues to evolve, so does the landscape of AI governance. Organizations that stay ahead of emerging trends can proactively manage compliance, mitigate risks, and drive innovation. Here are key trends shaping the future of AI governance:

- Automation of AI Compliance:
  - AI governance platforms now offer automated compliance tracking, continuous risk assessment, and real-time reporting, significantly streamlining regulatory adherence.
  - Example: Financial institutions leverage automated compliance dashboards, continuously assessing AI model performance and risk indicators against standards such as the EU AI Act and NIST frameworks.
- AI Ethics and Responsible AI Initiatives:
  - Organizations increasingly integrate ethical considerations and social responsibility into AI governance frameworks, prioritizing fairness, transparency, and societal impact.
  - Example: Tech companies are forming independent ethics boards and implementing AI fairness toolkits to systematically identify and address bias in AI systems, especially in sensitive domains like HR and healthcare.
- Cross-border Regulatory Alignment:
  - Global enterprises are preparing for increased harmonization of AI regulations across jurisdictions, aligning EU AI Act compliance efforts with frameworks like NIST and ISO standards to streamline global governance.
  - Example: Multinational healthcare providers align their AI diagnostic solutions to comply simultaneously with EU AI Act, U.S. FDA regulations, and international ISO standards, ensuring seamless global deployment.
- Expansion of Explainable AI (XAI):
  - Explainability will increasingly become a mandatory feature for high-risk AI systems, driving wider adoption of XAI technologies that improve transparency and user trust.
  - Example: Insurance companies and banks are adopting explainability frameworks, ensuring their AI-driven decisions in lending or claims processing are clear, understandable, and defensible to customers and regulators.
- Enhanced Incident Response and Audit Capabilities:
  - Organizations are bolstering their AI incident response plans and audit capabilities, adopting proactive risk detection methods such as real-time anomaly detection and automated incident reporting.
  - Example: Autonomous vehicle manufacturers implement AI-driven incident detection systems that automatically alert response teams when anomalies occur, significantly enhancing safety and regulatory compliance.

By closely tracking and proactively responding to these emerging trends, organizations can turn AI governance from a compliance obligation into a competitive advantage, driving innovation while maintaining trust and compliance in an increasingly regulated AI environment.

Key Takeaways:

| Compliance Step | Key Actions | Practical Examples |
|---|---|---|
| Step 1: Assess AI System Risks | Classify AI systems based on risk: Minimal, Limited, High, Banned | AI-driven hiring tools (High risk), Chatbots (Limited risk) |
| Step 2: Governance & Documentation | Maintain detailed AI system documentation and risk management strategies | Documenting algorithm versions, bias audits for AI hiring systems |
| Step 3: Risk Management Controls | Continuous monitoring, regular audits, robust incident response planning | Real-time compliance dashboards in financial institutions |
| Step 4: Transparency & Explainability | Clearly communicate AI involvement, implement explainable AI (XAI), maintain audit trails | Explainable loan decisions in banking, XAI in medical diagnostics |
| Step 5: Ongoing Compliance | Regular system audits, adaptive governance frameworks, ongoing training | Biannual audits of AI in healthcare, quarterly AI compliance workshops |
| Emerging Trends | AI compliance automation, responsible AI ethics, global regulatory alignment | Automated AI governance tools in finance, multinational alignment of regulations |

Stay Ahead of the Curve

Navigating the EU AI Act might seem challenging, but proactive compliance positions your organization for sustained innovation and reduced regulatory risk.