The clock is ticking on AI risk

“The clock is at a minute to midnight.”

That was the warning recently delivered by ASIC Commissioner Simone Constant as Australian regulators escalated concerns around AI-driven cyber risk, governance failures, and operational resilience.

The message from APRA and ASIC is becoming increasingly clear:

AI is accelerating operational and cyber risk faster than many organisations can adapt.

And regulators no longer view AI governance as a future compliance discussion.

It is rapidly becoming an operational resilience requirement.

AI is not creating entirely new risks — it is amplifying existing weaknesses

This is one of the most important nuances in the recent APRA and ASIC guidance.

Frontier AI models are not replacing traditional governance and cyber resilience fundamentals.

They are stress-testing them at unprecedented speed and scale.

ASIC specifically warned that frontier AI models are:

- lowering the barrier for sophisticated cyber attacks

- increasing attack speed and coordination

- accelerating vulnerability discovery and exploitation

- amplifying the consequences of weak operational controls

In practical terms, that means vulnerabilities that previously took weeks to exploit may now be exploited in hours.

Small governance gaps can rapidly cascade into operational failures.

And AI systems can now chain isolated weaknesses into highly effective attack pathways.

APRA’s strongest warning: governance is not keeping pace with AI adoption

APRA’s recent Letter to Industry on Artificial Intelligence highlighted something many enterprises already suspect internally:

AI adoption is accelerating far faster than governance maturity, operational controls, and assurance capability.

That observation matters.

Because most organisations still govern AI using frameworks originally designed for:

- static systems

- predictable workflows

- periodic change cycles

- deterministic software environments

AI systems do not behave that way.

Models evolve.

Prompts change.

Agents gain capabilities.

Integrations expand.

Autonomous workflows emerge.

Risk shifts continuously.

Yet governance often remains:

- checklist-driven

- policy-centric

- periodic

- point-in-time

That mismatch is becoming one of the biggest operational risks in enterprise AI adoption.

AI governance and cyber resilience can no longer be treated separately

One of the clearest signals from APRA and ASIC is that AI governance is no longer just an ethics or compliance discussion.

AI systems now influence:

- customer interactions

- software engineering

- fraud detection

- lending workflows

- claims processing

- autonomous agents

- operational decision-making

- cybersecurity operations

As AI becomes embedded into critical business processes, governance failures can rapidly become cyber resilience failures.

This is why both regulators are increasingly framing AI governance through the lens of:

- operational resilience

- measurable controls

- continuous assurance

- accountability

- evidence-backed governance

The real governance gap is operationalisation

Most organisations already have:

- AI principles

- Responsible AI frameworks

- governance committees

- ethics statements

- procurement checklists

But far fewer can continuously demonstrate:

- where AI risk exists

- how controls operate in production

- whether controls remain effective over time

- how AI behaviour changes across the lifecycle

- how third-party AI dependencies are governed

- how evidence is generated for audits and regulators

That is the real governance gap regulators are now focusing on.

Not the existence of frameworks.

But the ability to operationalise them.

Regulators are moving beyond policy assertions

This is perhaps the biggest shift happening right now.

Historically, many governance programs relied heavily on:

- policy documentation

- periodic reviews

- process attestations

- committee reporting

But ASIC explicitly warned that governance should not rely solely on assurances.

Boards increasingly need:

- measurable evidence

- test results

- operational reporting

- audit findings

- independent validation

- demonstrable resilience outcomes

Similarly, APRA warned that traditional point-in-time assurance approaches are poorly suited to probabilistic AI systems that evolve and degrade over time.

The question is no longer:

“Do you have an AI governance framework?”

The question is increasingly:

“Can you continuously evidence that AI systems are operating within acceptable risk boundaries?”

That is a fundamentally different standard.

The rise of the AI evidence gap

This is where many organisations are beginning to struggle.

Most enterprises can:

- describe governance processes

- reference frameworks

- document policies

- articulate principles

But when asked:

“Show evidence that this AI system behaves as expected in real-world conditions.”

the answers are often incomplete.

This creates:

- regulatory friction

- slower audits

- procurement delays

- operational uncertainty

- increased board exposure

- weaker resilience outcomes

And regulators are starting to recognise this gap clearly.

Third-party AI and supply chain opacity are becoming major risks

APRA also highlighted growing concern around:

- opaque AI supply chains

- concentration risk

- embedded AI services

- fourth-party AI dependencies

- limited visibility into upstream model behaviour

This is becoming one of the least understood enterprise risks in AI adoption.

Many organisations now rely heavily on:

- foundation model providers

- AI copilots

- AI APIs

- embedded AI platforms

- autonomous workflow vendors

Yet few have mature operational visibility into:

- how those systems evolve

- how risk propagates

- how controls are validated

- how fallback mechanisms operate

- how concentration risk is managed

Traditional third-party governance models were not designed for adaptive AI ecosystems.

The FIIG case changed the conversation

ASIC’s enforcement action against FIIG Securities marked an important turning point.

The Federal Court imposed $2.5 million in penalties after failures in cyber resilience and operational controls exposed thousands of customers to risk.

That outcome matters because it moves cyber and governance failures out of the realm of theoretical compliance concerns and into real operational and financial consequences.

The message from regulators is increasingly direct:

Weak governance and resilience controls are no longer viewed as isolated technology issues.

They are becoming board-level accountability issues.

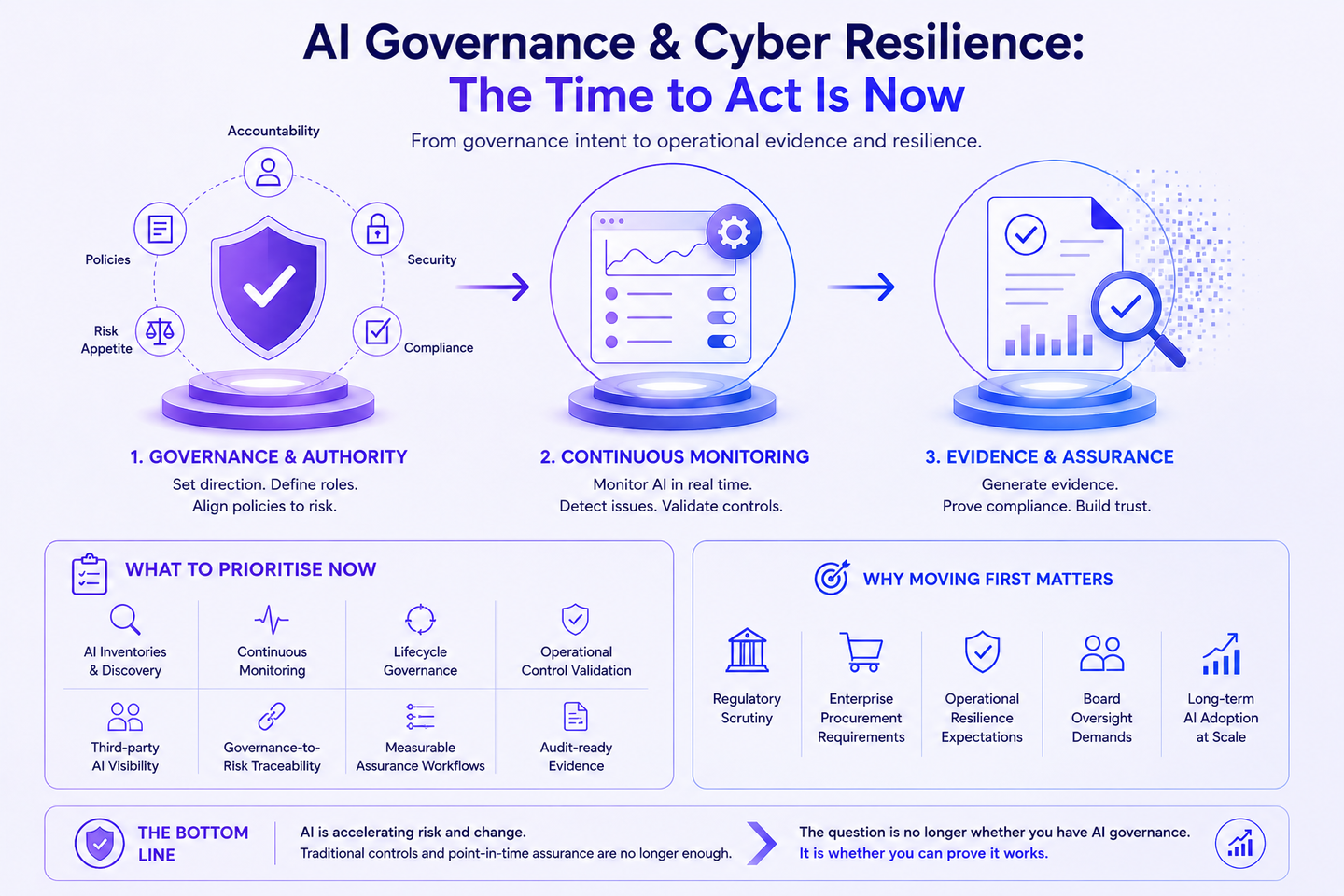

What organisations should prioritise now

The immediate challenge is not creating more governance bureaucracy.

It is building operational visibility and measurable assurance.

That means organisations increasingly need:

- AI inventories and discovery capabilities

- continuous AI monitoring

- lifecycle governance

- operational control validation

- third-party AI visibility

- governance-to-risk traceability

- measurable assurance workflows

- audit-ready evidence generation

The organisations moving first toward operational AI governance will likely be better positioned for:

- regulatory scrutiny

- enterprise procurement requirements

- operational resilience expectations

- board oversight demands

- long-term AI adoption at scale

Final thought

The direction from APRA and ASIC is becoming increasingly difficult to ignore.

AI governance can no longer operate as a static, policy-driven exercise.

The expectation is shifting toward:

- operational visibility

- measurable controls

- continuous monitoring

- evidence-backed assurance

- independent validation

- board-level accountability

The real question is no longer whether organisations have AI governance.

It is whether they can prove it works continuously in environments where AI systems evolve faster than traditional governance models were ever designed to handle.

References

- APRA Letter to Industry on Artificial Intelligence (AI)

- ASIC Open Letter to AFS Licensees and Market Participants