Why spreadsheet-driven AI governance cannot scale in the era of continuous AI systems

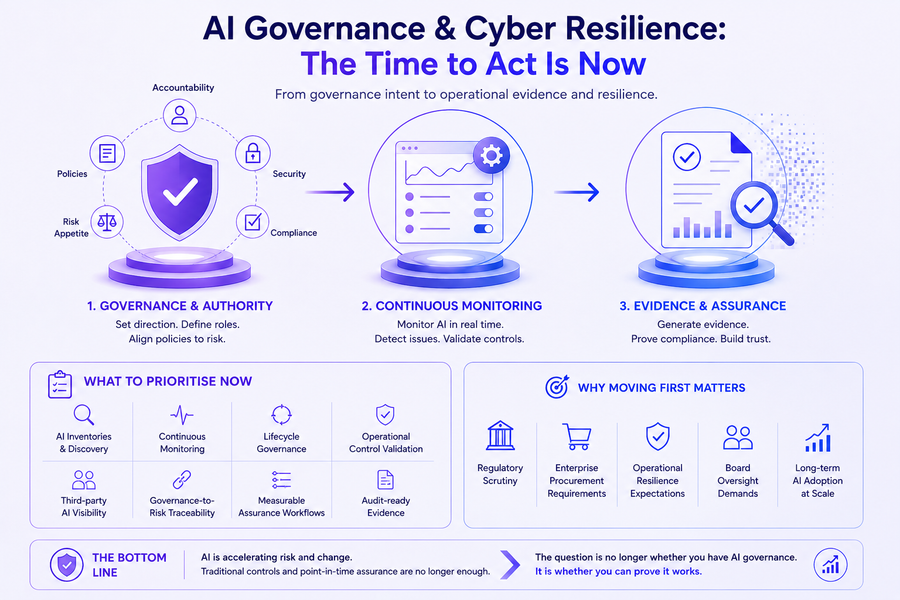

AI governance is entering the same phase cybersecurity entered a decade ago.

What began as policies, checklists, and annual reviews is rapidly becoming an operational discipline requiring continuous visibility, monitoring, and evidence.

That shift is forcing organizations to confront an uncomfortable reality:

Most ISO/IEC 42001 implementation work today is still manual.

Not because the standard requires it.

But because most governance programs were never designed for continuously evolving AI systems.

As enterprises deploy generative AI, autonomous agents, model orchestration pipelines, and rapidly changing ML workloads, governance teams are discovering that traditional compliance operating models simply do not scale.

The challenge is no longer understanding what ISO 42001 requires.

The challenge is operationalizing it.

And that changes everything.

The Real Problem Isn’t ISO 42001

Most organizations approaching ISO 42001 already understand the fundamentals:

- governance structures

- risk management

- lifecycle controls

- human oversight

- monitoring

- accountability

- continual improvement

The issue is not conceptual understanding.

The issue is execution.

In practice, many organizations still rely on:

- spreadsheets for AI inventories

- screenshots as audit evidence

- manual approval tracking

- disconnected policy repositories

- fragmented monitoring tools

- static governance reviews conducted quarterly or annually

That model worked reasonably well for traditional enterprise systems.

It breaks quickly in modern AI environments.

Especially when:

- models are retrained frequently

- prompts evolve daily

- datasets change continuously

- agents interact dynamically

- third-party AI services are introduced rapidly

- governance evidence becomes distributed across multiple platforms

AI systems are not static assets.

They are living operational systems.

Governance must evolve accordingly.

Why Traditional Governance Approaches Struggle

The biggest misconception in the market is that ISO 42001 is primarily a documentation exercise.

It is not.

At its core, the standard is pushing organizations toward operational accountability for AI systems.

That requires organizations to answer difficult questions continuously:

- What AI systems exist today?

- Who owns them?

- What risks are associated with them?

- What changed?

- What evidence supports oversight decisions?

- How are controls monitored operationally?

- What governance actions occurred at runtime?

Most organizations cannot answer these questions without significant manual effort.

And manual governance does not scale.

Not when AI deployment cycles move faster than traditional governance workflows.
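To make the questions above concrete, here is a minimal sketch of an inventory entry held as structured data instead of a spreadsheet row. Every name in it (`AISystemRecord`, the field names, the sample values) is an assumption made for illustration; ISO/IEC 42001 does not prescribe a schema. The point is only that "who owns it?" and "what changed?" become queries rather than manual reconciliation.

```python
from dataclasses import dataclass, asdict
import hashlib
import json


@dataclass(frozen=True)
class AISystemRecord:
    """One inventory entry. All field names are illustrative, not from the standard."""
    system_id: str
    owner: str
    risk_tier: str
    model_version: str
    prompt_version: str


def fingerprint(record: AISystemRecord) -> str:
    """Stable hash of a record, so a change in any field is detectable."""
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def changed_fields(old: AISystemRecord, new: AISystemRecord) -> dict:
    """Answer 'what changed?' as {field: (old_value, new_value)}."""
    before, after = asdict(old), asdict(new)
    return {k: (before[k], after[k]) for k in before if before[k] != after[k]}


# Hypothetical snapshots of the same system taken at two points in time.
previous = AISystemRecord("support-bot", "jane.doe", "high", "v12", "p40")
current = AISystemRecord("support-bot", "jane.doe", "high", "v13", "p41")

if fingerprint(previous) != fingerprint(current):
    print(changed_fields(previous, current))
```

In a real program the snapshots would presumably come from deployment tooling or a model registry rather than being typed in by hand, which is the part that removes the manual effort.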

The Governance Bottleneck Nobody Talks About

A large percentage of AI governance work today is operational overhead.

Not strategic governance.

Not risk analysis.

Not executive oversight.

Operational overhead.

Teams spend enormous amounts of time:

- gathering evidence

- updating inventories

- mapping controls

- compiling audit artifacts

- tracking approvals

- documenting reviews

- reconciling inconsistent records across systems

This creates what many governance teams quietly experience every day:

Governance fatigue.

The result is predictable:

- governance becomes reactive

- controls become stale

- visibility decreases

- audit preparation becomes chaotic

- accountability weakens

- AI adoption outpaces oversight capacity

Ironically, the faster organizations move with AI, the harder traditional governance models become to maintain.

The 80% That Should Be Automated

A significant portion of ISO 42001 operational work can, and increasingly should, be automated through integrated governance infrastructure.

Not because governance itself disappears.

But because evidence collection, visibility, and operational traceability should not depend on manual coordination.

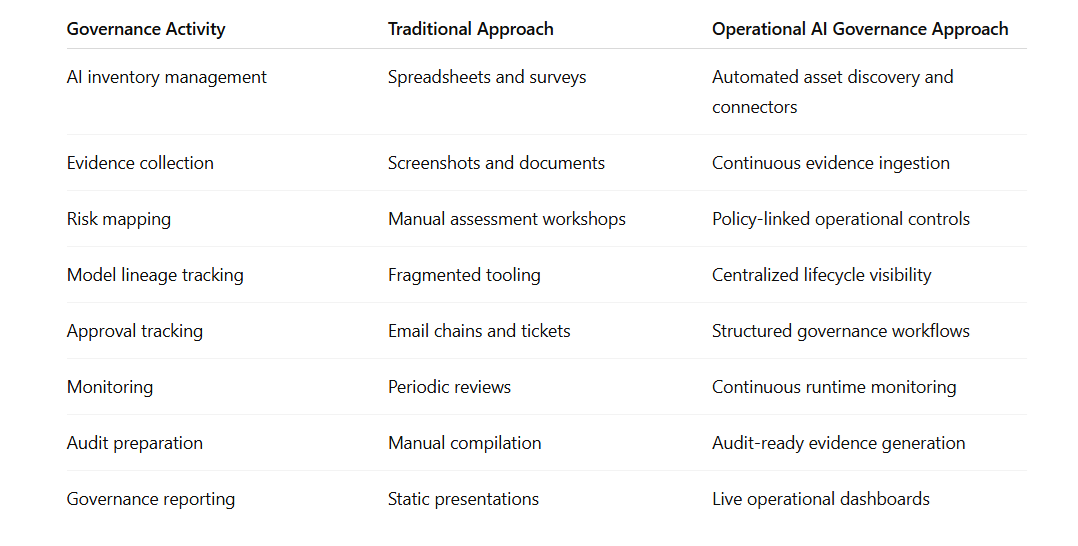

Here’s where the shift is happening:

This is not about replacing governance teams.

It is about enabling them to operate at the speed of AI systems.
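As one illustration of evidence collection that does not depend on manual coordination, the sketch below records governance evidence as an append-only, hash-chained log instead of a folder of screenshots. The class name `EvidenceLog`, the control labels, and the in-memory storage are all assumptions for the example; a real system would need durable storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone


class EvidenceLog:
    """Append-only, hash-chained list of evidence entries (in memory only)."""

    GENESIS = "0" * 64  # placeholder hash that precedes the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself.
        data = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(
            json.dumps(data, sort_keys=True).encode("utf-8")
        ).hexdigest()

    def append(self, system_id, control, detail):
        """Record one piece of evidence, linked to the previous entry."""
        entry = {
            "system_id": system_id,
            "control": control,  # hypothetical label, not a clause number
            "detail": detail,
            "recorded_at": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True if no entry was altered or reordered after the fact."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


log = EvidenceLog()
log.append("support-bot", "human-oversight", "reviewer approved release v13")
log.append("support-bot", "monitoring", "weekly evaluation run completed")
print(log.verify())
```

Chaining each entry to the hash of the one before it is a design choice, not a requirement: it lets a reviewer detect edits made after the fact, which a shared spreadsheet cannot do.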

Governance Is Becoming an Operational Discipline

The market is beginning to move beyond “AI policy management.”

A new operating model is emerging:

Operational AI Governance

This model treats governance as a continuous operational function embedded directly into AI delivery and oversight workflows.

Instead of relying solely on static reviews, organizations are increasingly moving toward:

- continuous monitoring

- runtime governance visibility

- lifecycle traceability

- evidence-driven oversight

- operational accountability

- continuous assurance models

This mirrors the evolution seen in cybersecurity, from annual assessments to continuous security operations.

AI governance is now entering the same transition.

The Rise of Runtime Governance

One of the most important shifts happening right now is the movement from static governance toward runtime governance.

Historically, governance focused on:

- policies

- approvals

- pre-deployment reviews

But AI systems behave dynamically after deployment.

Which means governance must extend into runtime operations.

Organizations increasingly need visibility into:

- model behavior changes

- evaluation outcomes

- human oversight events

- deployment modifications

- operational anomalies

- execution authority boundaries

- decision lineage

This is where continuous AI assurance becomes strategically important.

Because governance is no longer just about proving that policies exist.

It is about proving that governance is operationally active.
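A rough sketch of what "operationally active" could mean for two of the items above, execution authority boundaries and human oversight events, follows. The names `AuthorityBoundary` and `RuntimeGovernor`, the decision labels, and the sample actions are hypothetical; the example only shows that each runtime decision can leave a record as a side effect of being made.

```python
from dataclasses import dataclass, field


@dataclass
class AuthorityBoundary:
    """Actions an agent may take alone versus only with human approval."""
    allowed: set
    needs_approval: set


@dataclass
class RuntimeGovernor:
    """Checks each requested action and records the decision as an event."""
    boundary: AuthorityBoundary
    events: list = field(default_factory=list)

    def check(self, agent_id, action, approved_by=None):
        if action in self.boundary.allowed:
            decision = "allowed"
        elif action in self.boundary.needs_approval and approved_by:
            decision = "allowed_with_oversight"
        elif action in self.boundary.needs_approval:
            decision = "held_for_approval"
        else:
            decision = "denied"  # anything not listed is outside the boundary
        # The event list doubles as the record of human oversight events.
        self.events.append({
            "agent_id": agent_id,
            "action": action,
            "decision": decision,
            "approved_by": approved_by,
        })
        return decision


governor = RuntimeGovernor(
    AuthorityBoundary(allowed={"read_ticket"}, needs_approval={"issue_refund"})
)
print(governor.check("agent-7", "read_ticket"))
print(governor.check("agent-7", "issue_refund"))
print(governor.check("agent-7", "issue_refund", approved_by="jane.doe"))
print(governor.check("agent-7", "delete_account"))
```

Denying unlisted actions by default is an assumption of this sketch; an organization could reasonably choose a different default for low-risk systems.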

Why This Matters for Audit Readiness

Many organizations still approach audit readiness as a point-in-time exercise.

But AI systems evolve continuously.

Auditors and enterprise buyers are increasingly looking for:

- operational traceability

- governance evidence

- lifecycle visibility

- monitoring records

- accountability mapping

- control effectiveness

Static documentation alone is becoming insufficient.

The organizations that scale AI governance effectively will likely be the ones that can generate operational evidence continuously rather than assembling it manually every quarter.

This is a foundational shift.
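One way to picture continuous audit readiness, as opposed to a point-in-time scramble, is a check that flags controls whose evidence has gone stale. The function name `stale_controls`, the control labels, the 30-day threshold, and the dates below are all assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone


def stale_controls(latest_evidence, max_age, now):
    """Return controls whose newest evidence is missing or older than max_age.

    latest_evidence maps a control label to the timestamp of its most
    recent evidence, or None if no evidence has ever been recorded.
    """
    return sorted(
        control
        for control, recorded_at in latest_evidence.items()
        if recorded_at is None or now - recorded_at > max_age
    )


# Hypothetical snapshot; in practice this would be derived from an evidence store.
now = datetime(2025, 6, 30, tzinfo=timezone.utc)
latest = {
    "monitoring": datetime(2025, 6, 29, tzinfo=timezone.utc),
    "human-oversight": datetime(2025, 5, 1, tzinfo=timezone.utc),
    "risk-review": None,
}

print(stale_controls(latest, timedelta(days=30), now))
# prints ['human-oversight', 'risk-review']
```

Run on a schedule, a check like this surfaces gaps while they are still small instead of during audit preparation.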

The Emerging Governance Architecture

The future of AI governance is likely to look less like traditional compliance tooling and more like operational infrastructure.

That includes:

- AI system visibility layers

- governance telemetry

- runtime assurance controls

- continuous evidence pipelines

- operational risk monitoring

- centralized governance command centers

In this model, governance becomes embedded into the AI lifecycle itself.

Not separated from it.

This is where platforms like CognitiveView are helping shape the market conversation, not as documentation repositories but as infrastructure for operational AI governance and continuous assurance.

The distinction matters.

Because organizations are no longer asking:

“Do we have governance policies?”

They are increasingly asking:

“Can we operationalize governance continuously across rapidly changing AI systems?”

Those are fundamentally different questions.

The Future of ISO 42001 Will Be Operational

ISO 42001 is arriving at a critical moment.

AI adoption is accelerating faster than traditional governance operating models can support.

The organizations that succeed will not necessarily be the ones with the largest policy libraries.

They will be the ones that can:

- operationalize controls

- maintain continuous visibility

- automate governance evidence

- scale accountability

- integrate governance into delivery workflows

- support ongoing assurance at runtime

In other words:

The future of AI governance is not static compliance.

It is operational assurance.

And that future will require far more automation than most organizations are prepared for today.