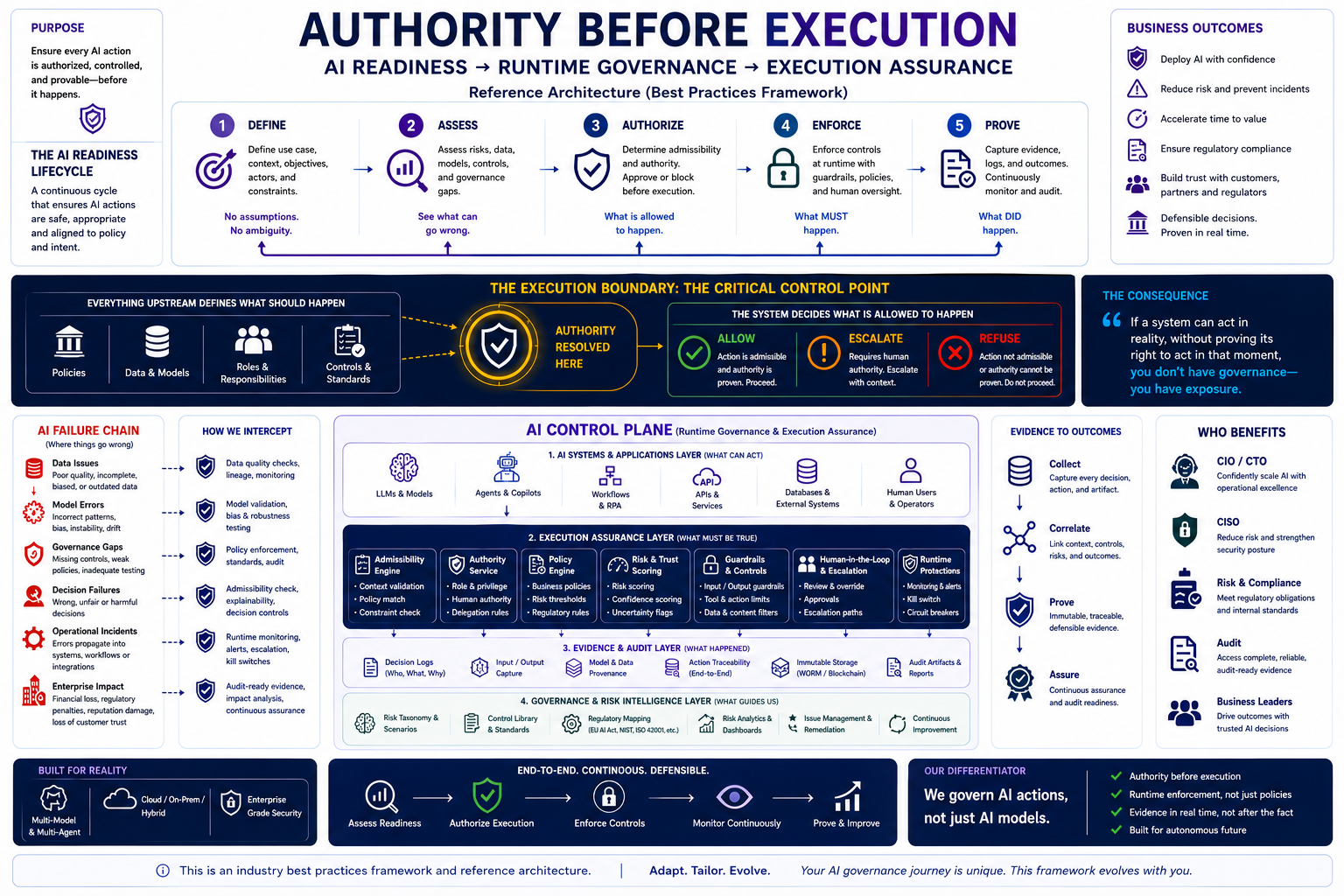

Why AI Readiness, Runtime Governance, and Execution Assurance Will Define Trusted Enterprise AI

Artificial Intelligence is no longer confined to experimentation.

AI systems are now:

- approving actions,

- triggering workflows,

- interacting with APIs,

- accessing enterprise systems,

- coordinating agents,

- influencing operations,

- and increasingly acting autonomously in real-world environments.

This shift changes the nature of governance completely.

The industry has spent years focusing on:

- model accuracy,

- fairness,

- explainability,

- bias detection,

- and compliance reporting.

Those remain important.

But a larger operational question is emerging:

Should this AI system be allowed to act right now?

That is the governance challenge enterprises must solve next.

At CognitiveView, we believe the future of Responsible AI depends on a new principle:

Authority Before Execution

Before an AI system acts:

- its authority must be validated,

- its controls must be enforced,

- its risk posture must be understood,

- and its actions must be provable.

Because once AI systems move from generating outputs to executing decisions, governance can no longer remain passive.

It must become operational.

The Shift From AI Models to AI Actions

Most governance frameworks today are still centered around models.

They focus on:

- model evaluation,

- documentation,

- policy management,

- and post-event monitoring.

But enterprise AI is evolving rapidly into:

- AI agents,

- autonomous copilots,

- orchestration systems,

- workflow automation,

- multi-agent architectures,

- and runtime decision systems.

The question that defines the risk surface is no longer just:

“What did the model generate?”

The real question is now:

“What did the system do?”

This distinction matters.

An AI system that can:

- approve a transaction,

- modify infrastructure,

- trigger operational workflows,

- escalate actions,

- access sensitive systems,

- or coordinate downstream agents

creates operational consequences in real time.

That means governance must exist:

- before execution,

- during execution,

- and after execution.

The AI Readiness Lifecycle

Responsible AI cannot be reduced to a single checklist or compliance report.

It requires a continuous operational lifecycle.

The framework below represents a practical AI Readiness and Execution Assurance model built around five continuous stages.

1. Define

No assumptions. No ambiguity.

AI governance starts long before deployment.

Organizations must clearly define:

- the use case,

- operational objectives,

- business constraints,

- decision boundaries,

- roles and responsibilities,

- and acceptable risk levels.

This phase establishes:

- what the AI system is intended to do,

- what it is not allowed to do,

- and who owns accountability.

Without this clarity, autonomous systems inherit ambiguity — and ambiguity becomes operational risk.
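One way to make the Define stage concrete is to record the definition as data that later stages can check against. The sketch below is illustrative only: `UseCaseDefinition`, `RiskLevel`, the field names, and the `refund-assistant` example are all hypothetical, not part of any CognitiveView product or API. It assumes a deny-by-default stance, where any action that is not explicitly allowed is out of scope.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class UseCaseDefinition:
    """Hypothetical record of what an AI system may and may not do."""

    name: str
    objective: str
    allowed_actions: frozenset[str]
    prohibited_actions: frozenset[str]
    accountable_owner: str
    max_acceptable_risk: RiskLevel

    def __post_init__(self) -> None:
        # An action listed as both allowed and prohibited is ambiguous.
        overlap = self.allowed_actions & self.prohibited_actions
        if overlap:
            raise ValueError(f"Ambiguous actions: {sorted(overlap)}")
        # Every use case needs a named owner for accountability.
        if not self.accountable_owner.strip():
            raise ValueError("An accountable owner is required")

    def is_in_scope(self, action: str) -> bool:
        # Deny by default: only explicitly allowed actions are in scope.
        return action in self.allowed_actions


# Example definition (all values are made up for illustration).
refunds = UseCaseDefinition(
    name="refund-assistant",
    objective="Resolve low-value refund requests",
    allowed_actions=frozenset({"issue_refund", "request_documents"}),
    prohibited_actions=frozenset({"close_account"}),
    accountable_owner="payments-operations",
    max_acceptable_risk=RiskLevel.MEDIUM,
)
```

Rejecting overlapping or ownerless definitions at construction time is one way to stop ambiguity from reaching a deployed system.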

2. Assess

See what can go wrong.

Once objectives are defined, organizations must assess:

- models,

- data quality,

- governance controls,

- operational exposure,

- security dependencies,

- and runtime risks.

This includes identifying:

- policy gaps,

- bias and robustness issues,

- incomplete controls,

- escalation weaknesses,

- and failure scenarios.

Many AI incidents begin here: not with a catastrophic failure, but with a governance gap that went unnoticed.
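At its simplest, finding incomplete controls means comparing what a use case requires with what is actually in place. The sketch below is a minimal illustration under that assumption. The control names and the `find_control_gaps` helper are hypothetical, and a real assessment would also cover data quality, robustness, and failure scenarios.

```python
def find_control_gaps(required: set[str], implemented: set[str]) -> list[str]:
    """Return the required controls that are not yet implemented, sorted by name."""
    return sorted(required - implemented)


# Hypothetical control inventory for a single use case.
required_controls = {
    "escalation_path",
    "human_approval_step",
    "input_validation",
    "audit_logging",
}
implemented_controls = {"input_validation", "audit_logging"}

gaps = find_control_gaps(required_controls, implemented_controls)
for control in gaps:
    print(f"Missing control: {control}")
```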

3. Authorize

Determine what is allowed to happen.

Authorization is the most overlooked layer in enterprise AI governance.

Before an AI system executes an action, the organization must determine:

- Is the action admissible?

- Does policy permit it?

- Does the AI have delegated authority?

- Is human approval required?

- Does the system's confidence meet the required threshold?

- Are operational conditions safe?

This creates what we call:

The Execution Boundary

The execution boundary is the critical control point where:

- governance,

- authority,

- risk,

- runtime context,

- and policy enforcement

come together before action occurs.

At this point, the system determines whether to:

- Allow

- Escalate

- Refuse

This is where governance becomes real.
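The questions above can be sketched as a single decision function evaluated at the execution boundary. This is a minimal illustration, not a reference implementation. `ActionRequest`, `decide`, and the `0.9` default threshold are hypothetical. The mapping is also an assumption: policy, authority, or safety failures lead to Refuse, while a need for human approval or low confidence leads to Escalate.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    REFUSE = "refuse"


@dataclass(frozen=True)
class ActionRequest:
    """Hypothetical snapshot of the facts known when an action is proposed."""

    action: str
    permitted_by_policy: bool
    has_delegated_authority: bool
    requires_human_approval: bool
    confidence: float
    conditions_safe: bool


def decide(request: ActionRequest, min_confidence: float = 0.9) -> Decision:
    # Assumption: a policy, authority, or safety failure cannot be fixed by
    # escalating, so the action is refused outright.
    if not (
        request.permitted_by_policy
        and request.has_delegated_authority
        and request.conditions_safe
    ):
        return Decision.REFUSE
    # Assumption: the action is admissible but needs a human decision.
    if request.requires_human_approval or request.confidence < min_confidence:
        return Decision.ESCALATE
    return Decision.ALLOW


request = ActionRequest(
    action="issue_refund",
    permitted_by_policy=True,
    has_delegated_authority=True,
    requires_human_approval=False,
    confidence=0.72,
    conditions_safe=True,
)
outcome = decide(request)  # confidence is below the threshold, so this escalates
```

Checking the refusal conditions first means an inadmissible action is never sent to a human for approval.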

4. Enforce

Ensure what MUST happen.

Policies without runtime enforcement are not governance.

They are documentation.

Modern enterprise AI systems require runtime controls capable of:

- enforcing policies,

- validating constraints,

- monitoring behavior,

- triggering escalation,

- applying human oversight,

- and blocking unsafe actions in real time.

This includes:

- admissibility engines,

- policy engines,

- authority services,

- runtime protections,

- guardrails,

- kill switches,

- and human-in-the-loop escalation.

The future of AI governance will be defined by organizations that can operationalize these controls continuously — not periodically.
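To show what runtime enforcement can look like in code, the sketch below wraps every action in a guard that checks a kill switch and a set of constraints before anything runs. It covers only constraint validation, blocking, and a kill switch; monitoring and human escalation are left out. `RuntimeGuard`, `ActionBlocked`, and the refund example are hypothetical names chosen for illustration.

```python
import threading
from typing import Any, Callable

Constraint = Callable[[dict], bool]


class ActionBlocked(Exception):
    """Raised when a runtime control stops an action."""


class RuntimeGuard:
    """Hypothetical guard that every action must pass through before it runs."""

    def __init__(self) -> None:
        self._kill_switch = threading.Event()
        self._constraints: list[Constraint] = []

    def add_constraint(self, check: Constraint) -> None:
        self._constraints.append(check)

    def trip_kill_switch(self) -> None:
        # Once set, every subsequent execute() call is blocked.
        self._kill_switch.set()

    def execute(self, action: Callable[[dict], Any], params: dict) -> Any:
        if self._kill_switch.is_set():
            raise ActionBlocked("kill switch engaged")
        for check in self._constraints:
            if not check(params):
                raise ActionBlocked(f"constraint failed: {check.__name__}")
        # The action runs only after all controls have passed.
        return action(params)


def within_refund_limit(params: dict) -> bool:
    return params.get("amount", 0) <= 100


def issue_refund(params: dict) -> str:
    return f"refunded {params['amount']}"


guard = RuntimeGuard()
guard.add_constraint(within_refund_limit)
result = guard.execute(issue_refund, {"amount": 40})
```

The point of the pattern is that the action is only reachable through `execute`, so a policy cannot be skipped by calling the action directly.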

5. Prove

Show what DID happen.

Enterprise trust increasingly depends on evidence.

Organizations must be able to prove:

- why a decision occurred,

- what policies were applied,

- who authorized execution,

- what controls were enforced,

- and what outcomes resulted.

This requires:

- immutable logs,

- traceability,

- audit artifacts,

- decision lineage,

- runtime evidence,

- and continuous monitoring.

Enterprise procurement teams, regulators, customers, and auditors will increasingly ask:

“Can you prove your AI system acted appropriately?”

Organizations without evidence-backed governance will struggle to answer confidently.
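One common way to approximate immutable logs and decision lineage is a hash chain, where each entry stores the hash of the one before it. The sketch below is a minimal in-memory illustration of that idea. It is tamper-evident rather than truly immutable, and a production system would also need durable, access-controlled storage. `EvidenceLog` and the record fields are hypothetical.

```python
import hashlib
import json


class EvidenceLog:
    """Hypothetical append-only log in which each entry commits to its predecessor."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._entries: list[dict] = []

    @staticmethod
    def _digest(prev_hash: str, record: dict) -> str:
        # Serialize with sorted keys so the same record always hashes the same way.
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        entry_hash = self._digest(prev_hash, record)
        self._entries.append(
            {"record": dict(record), "prev_hash": prev_hash, "hash": entry_hash}
        )
        return entry_hash

    def verify(self) -> bool:
        # Recompute every hash; an edited or reordered entry breaks the chain.
        prev_hash = self.GENESIS
        for entry in self._entries:
            expected = self._digest(prev_hash, entry["record"])
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = EvidenceLog()
log.append({"action": "issue_refund", "decision": "escalate", "policy": "refund-v2"})
log.append({"action": "issue_refund", "decision": "allow", "authorized_by": "j.doe"})
chain_is_intact = log.verify()
```

Because each hash depends on everything before it, changing an earlier record after the fact causes `verify()` to fail, which is the property an auditor relies on.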

The AI Failure Chain

AI failures rarely occur because of a single catastrophic event.

They emerge through cascading governance breakdowns:

- poor-quality data,

- weak controls,

- incorrect assumptions,

- incomplete validation,

- unclear accountability,

- missing escalation paths,

- or autonomous execution without oversight.

The consequences are significant:

- operational disruption,

- compliance violations,

- financial exposure,

- reputational damage,

- customer trust erosion,

- and systemic enterprise risk.

This is why governance must move upstream — before execution occurs.

Runtime Governance Is Becoming Essential Infrastructure

As AI systems become operational infrastructure, governance itself must become operational infrastructure.

Future-ready organizations will require:

- runtime admissibility validation,

- authority resolution,

- continuous policy enforcement,

- risk and trust scoring,

- escalation orchestration,

- evidence generation,

- and execution assurance.

This is larger than compliance.

It is about ensuring AI systems behave safely, predictably, and accountably in dynamic environments.

The Rise of Execution Assurance

The next era of Responsible AI will not be defined solely by:

- model cards,

- static assessments,

- or governance documentation.

It will be defined by:

Execution Assurance

Execution assurance means:

- validating actions before execution,

- enforcing controls during runtime,

- monitoring continuously,

- capturing evidence automatically,

- and proving governance after the fact.

This transforms governance from:

reactive oversight

into:

continuous operational control.

We Must Govern AI Actions — Not Just AI Models

The industry is entering a new phase of AI maturity.

The organizations that succeed will not simply deploy more AI.

They will deploy AI responsibly at operational scale.

That requires systems capable of:

- governing runtime behavior,

- validating authority,

- enforcing controls,

- generating evidence,

- and continuously assuring trust.

Because ultimately:

If a system can act in reality without proving its right to act at that moment, you do not have governance — you have exposure.

CognitiveView’s Perspective

At CognitiveView, we believe AI governance must evolve beyond passive monitoring and static compliance.

The future requires:

- AI readiness,

- runtime governance,

- execution assurance,

- and evidence-backed accountability.

This is why our focus is not only on models.

It is on governing AI actions across the full lifecycle:

- Define

- Assess

- Authorize

- Enforce

- Prove

Because the future of Responsible AI will belong to organizations that can demonstrate: